IncrementalPCA

- class sklearn.decomposition.IncrementalPCA(n_components=None, *, whiten=False, copy=True, batch_size=None)

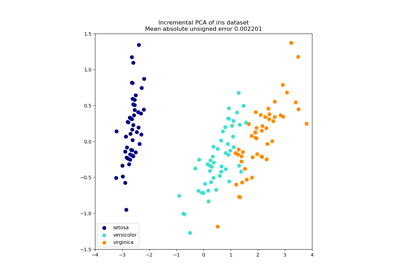

Incremental principal components analysis (IPCA).

Linear dimensionality reduction using Singular Value Decomposition of the data, keeping only the most significant singular vectors to project the data to a lower dimensional space. The input data is centered but not scaled for each feature before applying the SVD.

Depending on the size of the input data, this algorithm can be much more memory efficient than a PCA, and allows sparse input.

This algorithm has constant memory complexity, on the order of batch_size * n_features, enabling use of np.memmap files without loading the entire file into memory. For sparse matrices, the input is converted to dense in batches (in order to be able to subtract the mean), which avoids storing the entire dense matrix at any one time.

The computational overhead of each SVD is O(batch_size * n_features ** 2), but only 2 * batch_size samples remain in memory at a time. There will be n_samples / batch_size SVD computations to get the principal components, versus 1 large SVD of complexity O(n_samples * n_features ** 2) for PCA.

For a usage example, see Incremental PCA.
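A minimal sketch of the memory-bounded pattern described above, assuming the data sit in a float64 np.memmap file; the temporary file name data.dat and the chunk size of 100 rows are illustrative choices. Each slice of the memmap is only read from disk when partial_fit receives it, so at most one chunk is held in memory at a time.

```python
import os
import tempfile

import numpy as np
from sklearn.decomposition import IncrementalPCA

n_samples, n_features, chunk = 1_000, 20, 100

# Write random data to a disk-backed array (a stand-in for a large file).
path = os.path.join(tempfile.mkdtemp(), "data.dat")  # hypothetical file name
rng = np.random.default_rng(0)
X_disk = np.memmap(path, dtype="float64", mode="w+", shape=(n_samples, n_features))
X_disk[:] = rng.normal(size=(n_samples, n_features))
X_disk.flush()

# Reopen read-only and feed one chunk of rows at a time to partial_fit.
X_disk = np.memmap(path, dtype="float64", mode="r", shape=(n_samples, n_features))
ipca = IncrementalPCA(n_components=5)
for start in range(0, n_samples, chunk):
    ipca.partial_fit(X_disk[start:start + chunk])

print(ipca.n_samples_seen_, ipca.components_.shape)
```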

Read more in the User Guide.

Added in version 0.16.

- Parameters:

- n_components : int, default=None

Number of components to keep. If n_components is None, then n_components is set to min(n_samples, n_features).

- whiten : bool, default=False

When True (False by default), the output of transform is divided by the square root of explained_variance_ to ensure uncorrelated outputs with unit component-wise variances.

Whitening will remove some information from the transformed signal (the relative variance scales of the components) but can sometimes improve the predictive accuracy of the downstream estimators by making data respect some hard-wired assumptions.

- copy : bool, default=True

If False, X will be overwritten. copy=False can be used to save memory but is unsafe for general use.

- batch_size : int, default=None

The number of samples to use for each batch. Only used when calling fit. If batch_size is None, then batch_size is inferred from the data and set to 5 * n_features, to provide a balance between approximation accuracy and memory consumption.
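The snippet below checks two of the parameters above on synthetic data (array sizes and seed are arbitrary): with batch_size=None the fitted batch_size_ equals 5 * n_features, and with whiten=True and all samples in a single batch, each transformed column has unit sample variance (ddof=1, matching how explained_variance_ is normalized).

```python
import numpy as np
from sklearn.decomposition import IncrementalPCA

rng = np.random.default_rng(0)
X = rng.normal(size=(300, 4)) * [5.0, 2.0, 1.0, 0.5]  # features on different scales

# batch_size=None: the batch size is inferred as 5 * n_features = 20.
ipca = IncrementalPCA(n_components=2).fit(X)
print(ipca.batch_size_)

# whiten=True with one batch (batch_size >= n_samples): unit-variance outputs.
ipca_w = IncrementalPCA(n_components=2, whiten=True, batch_size=300).fit(X)
X_w = ipca_w.transform(X)
print(X_w.var(axis=0, ddof=1))  # approximately 1 for each component
```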

- Attributes:

- components_ : ndarray of shape (n_components, n_features)

Principal axes in feature space, representing the directions of maximum variance in the data. Equivalently, the right singular vectors of the centered input data, parallel to its eigenvectors. The components are sorted by decreasing explained_variance_.

- explained_variance_ : ndarray of shape (n_components,)

Variance explained by each of the selected components.

- explained_variance_ratio_ : ndarray of shape (n_components,)

Fraction of variance explained by each of the selected components. If all components are stored, the explained variance ratios sum to 1.0.

- singular_values_ : ndarray of shape (n_components,)

The singular values corresponding to each of the selected components. The singular values are equal to the 2-norms of the n_components variables in the lower-dimensional space.

- mean_ : ndarray of shape (n_features,)

Per-feature empirical mean, aggregated over calls to partial_fit.

- var_ : ndarray of shape (n_features,)

Per-feature empirical variance, aggregated over calls to partial_fit.

- noise_variance_ : float

The estimated noise variance following the Probabilistic PCA model from Tipping and Bishop 1999. See “Pattern Recognition and Machine Learning” by C. Bishop, Section 12.2.1, p. 574, or http://www.miketipping.com/papers/met-mppca.pdf.

- n_components_ : int

The estimated number of components. Relevant when n_components=None.

- n_samples_seen_ : int

The number of samples processed by the estimator. It is reset on new calls to fit, but increments across partial_fit calls.

- batch_size_ : int

The batch size in use: batch_size if it was set, otherwise the value inferred from the data.

- n_features_in_ : int

Number of features seen during fit.

Added in version 0.24.

- feature_names_in_ : ndarray of shape (n_features_in_,)

Names of features seen during fit. Defined only when X has feature names that are all strings.

Added in version 1.0.
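A short check of how several attributes accumulate across partial_fit calls, on arbitrary synthetic data. Keeping all four components makes explained_variance_ratio_ sum to 1.0, mean_ matches the mean of every sample seen so far, and a later call to fit starts n_samples_seen_ over from zero.

```python
import numpy as np
from sklearn.decomposition import IncrementalPCA

rng = np.random.default_rng(1)
X = rng.normal(size=(120, 4))

ipca = IncrementalPCA(n_components=4)
seen = []
for batch in np.array_split(X, 3):  # three batches of 40 rows
    ipca.partial_fit(batch)
    seen.append(int(ipca.n_samples_seen_))
print(seen)  # [40, 80, 120]

ratio_sum = ipca.explained_variance_ratio_.sum()  # 1.0 up to rounding
mean_ok = np.allclose(ipca.mean_, X.mean(axis=0))
print(ratio_sum, mean_ok)

ipca.fit(X[:50])  # fit() resets the accumulated state
print(ipca.n_samples_seen_)
```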

See also

PCA : Principal component analysis (PCA).

KernelPCA : Kernel Principal component analysis (KPCA).

SparsePCA : Sparse Principal Components Analysis (SparsePCA).

TruncatedSVD : Dimensionality reduction using truncated SVD.
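To relate IncrementalPCA to PCA from the list above: when the whole dataset fits in one batch (batch_size >= n_samples), IncrementalPCA performs a single SVD of the centered data, so its explained variances should match PCA's. The data and sizes below are arbitrary, and because component signs can differ between estimators the comparison uses absolute values. With several smaller batches and n_components < n_features, the result is in general only an approximation.

```python
import numpy as np
from sklearn.decomposition import PCA, IncrementalPCA

rng = np.random.default_rng(2)
X = rng.normal(size=(200, 6)) @ rng.normal(size=(6, 6))  # correlated features

pca = PCA(n_components=3, svd_solver="full").fit(X)
ipca = IncrementalPCA(n_components=3, batch_size=200).fit(X)  # a single batch

print(np.allclose(ipca.explained_variance_, pca.explained_variance_))
print(np.allclose(np.abs(ipca.components_), np.abs(pca.components_)))

# Several batches: close to PCA, but not identical in general.
ipca_b = IncrementalPCA(n_components=3, batch_size=50).fit(X)
print(ipca_b.explained_variance_ratio_, pca.explained_variance_ratio_)
```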

Notes

Implements the incremental PCA model from Ross et al. (2008) [1]. This model is an extension of the Sequential Karhunen-Loeve Transform from Levy and Lindenbaum (2000) [2].
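To make the incremental update concrete, here is a simplified NumPy sketch of a mean-corrected merge step in the spirit of Ross et al.; it is written for illustration and is not scikit-learn's code. The helper name merge_batch is hypothetical, and the sketch keeps every component (no truncation to n_components, no sign normalization). Because nothing is discarded, the singular values after the last batch should equal those of a single SVD of the full centered data.

```python
import numpy as np


def merge_batch(S, Vt, mean, n_seen, X_batch):
    """Fold X_batch into a running (S, Vt, mean, n_seen) summary.

    Hypothetical helper: all components are kept, so the update is exact.
    """
    n_batch = X_batch.shape[0]
    batch_mean = X_batch.mean(axis=0)
    if n_seen == 0:
        # First batch: plain SVD of the centered data.
        _, S, Vt = np.linalg.svd(X_batch - batch_mean, full_matrices=False)
        return S, Vt, batch_mean, n_batch
    n_total = n_seen + n_batch
    new_mean = (n_seen * mean + n_batch * batch_mean) / n_total
    # Extra row compensating for the shift between the running mean and the batch mean.
    correction = np.sqrt(n_seen * n_batch / n_total) * (mean - batch_mean)
    stacked = np.vstack([S[:, None] * Vt, X_batch - batch_mean, correction])
    _, S, Vt = np.linalg.svd(stacked, full_matrices=False)
    return S, Vt, new_mean, n_total


rng = np.random.default_rng(3)
# Row-dependent drift so that the batch means differ from each other.
X = rng.normal(size=(200, 5)) + np.linspace(0.0, 5.0, 200)[:, None]

S, Vt, mean, n_seen = None, None, None, 0
for X_batch in np.array_split(X, 4):
    S, Vt, mean, n_seen = merge_batch(S, Vt, mean, n_seen, X_batch)

S_full = np.linalg.svd(X - X.mean(axis=0), compute_uv=False)
print(np.allclose(S, S_full), np.allclose(mean, X.mean(axis=0)))
```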

We have specifically abstained from an optimization used by the authors of both papers: a QR decomposition used in specific situations to reduce the algorithmic complexity of the SVD. The source for this technique is Matrix Computations (Golub and Van Loan 1997 [3]). This technique has been omitted because it is advantageous only when decomposing a matrix with n_samples (rows) >= 5/3 * n_features (columns), and it hurts the readability of the implemented algorithm. This would be a good opportunity for future optimization, if it is deemed necessary.

References

[1] D. Ross, J. Lim, R. Lin, M. Yang. Incremental Learning for Robust Visual Tracking, International Journal of Computer Vision, Volume 77, Issue 1-3, pp. 125-141, May 2008. https://www.cs.toronto.edu/~dross/ivt/RossLimLinYang_ijcv.pdf

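[2] A. Levy and M. Lindenbaum. Sequential Karhunen-Loeve Basis Extraction and its Application to Images, IEEE Transactions on Image Processing, Volume 9, Number 8, pp. 1371-1374, August 2000.

[3] G. Golub and C. Van Loan. Matrix Computations, Third Edition, Chapter 5, Section 5.4.4, pp. 252-253, 1997.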

Examples

```python
>>> from sklearn.datasets import load_digits
>>> from sklearn.decomposition import IncrementalPCA
>>> from scipy import sparse
>>> X, _ = load_digits(return_X_y=True)
>>> transformer = IncrementalPCA(n_components=7, batch_size=200)
>>> # either partially fit on smaller batches of data
>>> transformer.partial_fit(X[:100, :])
IncrementalPCA(batch_size=200, n_components=7)
>>> # or let the fit function itself divide the data into batches
>>> X_sparse = sparse.csr_array(X)
>>> X_transformed = transformer.fit_transform(X_sparse)
>>> X_transformed.shape
(1797, 7)
```

- fit(X, y=None)

Fit the model with X, using minibatches of size batch_size.

- Parameters:

- X : {array-like, sparse matrix} of shape (n_samples, n_features)

Training data, where n_samples is the number of samples and n_features is the number of features.

- y : Ignored

Not used, present for API consistency by convention.

- Returns:

- self : object

Returns the instance itself.
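The description of fit above can be checked directly: with a sample count that is a multiple of batch_size, fit(X) should give the same components as calling partial_fit on consecutive slices of batch_size rows. This is a sketch on synthetic data; the sizes are arbitrary and chosen so that the slices are unambiguous.

```python
import numpy as np
from sklearn.decomposition import IncrementalPCA

rng = np.random.default_rng(4)
X = rng.normal(size=(120, 6))

# One call: fit splits X into batches of 40 rows internally.
auto = IncrementalPCA(n_components=3, batch_size=40).fit(X)

# Manual loop over the same consecutive slices.
manual = IncrementalPCA(n_components=3)
for start in range(0, X.shape[0], 40):
    manual.partial_fit(X[start:start + 40])

print(np.allclose(auto.components_, manual.components_))
print(auto.n_samples_seen_, manual.n_samples_seen_)
```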

- fit_transform(X, y=None, **fit_params)

Fit to data, then transform it.

Fits transformer to X and y with optional parameters fit_params and returns a transformed version of X.

- Parameters:

- X : array-like of shape (n_samples, n_features)

Input samples.

- y : array-like of shape (n_samples,) or (n_samples, n_outputs), default=None

Target values (None for unsupervised transformations).

- **fit_params : dict

Additional fit parameters. Pass only if the estimator accepts additional params in its fit method.

- Returns:

- X_new : ndarray of shape (n_samples, n_features_new)

Transformed array.

- get_covariance()

Compute data covariance with the generative model.

cov = components_.T * S**2 * components_ + sigma2 * eye(n_features), where S**2 contains the explained variances and sigma2 contains the noise variance.

- Returns:

- cov : array of shape (n_features, n_features)

Estimated covariance of data.
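A quick sanity check of the generative-model covariance on arbitrary synthetic data: when every component is kept, noise_variance_ is 0 and the model covariance should reduce to the ordinary sample covariance (np.cov uses the same n_samples - 1 normalization as explained_variance_).

```python
import numpy as np
from sklearn.decomposition import IncrementalPCA

rng = np.random.default_rng(5)
X = rng.normal(size=(150, 4)) @ rng.normal(size=(4, 4))

ipca = IncrementalPCA(n_components=4, batch_size=50).fit(X)  # all components kept
cov = ipca.get_covariance()

print(ipca.noise_variance_)
print(np.allclose(cov, np.cov(X, rowvar=False)))
```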

- get_feature_names_out(input_features=None)

Get output feature names for transformation.

The feature names out will be prefixed by the lowercased class name. For example, if the transformer outputs 3 features, then the feature names out are: ["class_name0", "class_name1", "class_name2"].

- Parameters:

- input_features : array-like of str or None, default=None

Only used to validate feature names with the names seen in fit.

- Returns:

- feature_names_out : ndarray of str objects

Transformed feature names.

- get_metadata_routing()

Get metadata routing of this object.

Please check the User Guide on how the routing mechanism works.

- Returns:

- routing : MetadataRequest

A MetadataRequest encapsulating routing information.

- get_params(deep=True)

Get parameters for this estimator.

- Parameters:

- deep : bool, default=True

If True, will return the parameters for this estimator and contained subobjects that are estimators.

- Returns:

- params : dict

Parameter names mapped to their values.

- get_precision()

Compute data precision matrix with the generative model.

Equals the inverse of the covariance but computed with the matrix inversion lemma for efficiency.

- Returns:

- precision : array of shape (n_features, n_features)

Estimated precision of data.
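With fewer components than features, noise_variance_ is positive and the precision is computed through the matrix inversion lemma rather than a direct inverse. A small check on arbitrary synthetic data (two dominant directions plus isotropic noise) that the result still inverts get_covariance():

```python
import numpy as np
from sklearn.decomposition import IncrementalPCA

rng = np.random.default_rng(7)
X = rng.normal(size=(200, 5)) * [4.0, 3.0, 1.0, 1.0, 1.0]

ipca = IncrementalPCA(n_components=2, batch_size=50).fit(X)
precision = ipca.get_precision()
cov = ipca.get_covariance()

print(ipca.noise_variance_ > 0)
print(np.allclose(precision @ cov, np.eye(5)))
```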

- inverse_transform(X)

Transform data back to its original space.

In other words, return an input X_original whose transform would be X.

- Parameters:

- X : array-like of shape (n_samples, n_components)

New data, where n_samples is the number of samples and n_components is the number of components.

- Returns:

- X_original : array-like of shape (n_samples, n_features)

Original data, where n_samples is the number of samples and n_features is the number of features.

Notes

If whitening is enabled, inverse_transform will compute the exact inverse operation, which includes reversing whitening.
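A round-trip sketch on arbitrary data: when all components are kept, inverse_transform(transform(X)) recovers X with or without whitening. With fewer components, the round trip only returns the projection of X onto the kept subspace, so some reconstruction error remains.

```python
import numpy as np
from sklearn.decomposition import IncrementalPCA

rng = np.random.default_rng(8)
X = rng.normal(size=(60, 3))

roundtrip_ok = []
for whiten in (False, True):
    ipca = IncrementalPCA(n_components=3, whiten=whiten, batch_size=20).fit(X)
    X_back = ipca.inverse_transform(ipca.transform(X))
    roundtrip_ok.append(bool(np.allclose(X_back, X)))
print(roundtrip_ok)

# Fewer components: the reconstruction is a projection, not an exact inverse.
ipca2 = IncrementalPCA(n_components=2, batch_size=20).fit(X)
err = np.abs(ipca2.inverse_transform(ipca2.transform(X)) - X).max()
print(err)
```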

- partial_fit(X, y=None, check_input=True)

Incremental fit with X. All of X is processed as a single batch.

- Parameters:

- X : array-like of shape (n_samples, n_features)

Training data, where n_samples is the number of samples and n_features is the number of features.

- y : Ignored

Not used, present for API consistency by convention.

- check_input : bool, default=True

Run check_array on X.

- Returns:

- self : object

Returns the instance itself.
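One practical constraint when streaming data into partial_fit: each batch must contain at least n_components rows, otherwise a ValueError is raised and the estimator state is left as it was. The sketch below uses arbitrary sizes to show a rejected short batch followed by a valid one.

```python
import numpy as np
from sklearn.decomposition import IncrementalPCA

rng = np.random.default_rng(9)
X = rng.normal(size=(100, 8))

ipca = IncrementalPCA(n_components=5)
ipca.partial_fit(X[:50])  # fine: 50 rows >= 5 components

raised = False
try:
    ipca.partial_fit(X[50:53])  # only 3 rows < n_components
except ValueError as exc:
    raised = True
    print("rejected:", exc)

ipca.partial_fit(X[50:])  # the remaining 50 rows as one batch
print(ipca.n_samples_seen_)
```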

- set_output(*, transform=None)

Set output container.

Refer to the User Guide for more details, and to Introducing the set_output API for an example of how to use the API.

- Parameters:

- transform : {"default", "pandas", "polars"}, default=None

Configure output of transform and fit_transform.

- "default": Default output format of a transformer
- "pandas": DataFrame output
- "polars": Polars output
- None: Transform configuration is unchanged

Added in version 1.4: "polars" option was added.

- Returns:

- self : estimator instance

Estimator instance.

- set_params(**params)

Set the parameters of this estimator.

The method works on simple estimators as well as on nested objects (such as Pipeline). The latter have parameters of the form <component>__<parameter> so that it's possible to update each component of a nested object.

- Parameters:

- **params : dict

Estimator parameters.

- Returns:

- self : estimator instance

Estimator instance.
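A sketch of the nested <component>__<parameter> form with a Pipeline; the step names "scale" and "ipca" and the data shape are arbitrary choices for illustration. The updated value is visible through get_params and takes effect on the next fit.

```python
import numpy as np
from sklearn.decomposition import IncrementalPCA
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

pipe = Pipeline([("scale", StandardScaler()), ("ipca", IncrementalPCA(n_components=2))])

# Nested parameters use the <component>__<parameter> form.
pipe.set_params(ipca__n_components=3, ipca__batch_size=40)
print(pipe.get_params()["ipca__n_components"])

X = np.random.default_rng(10).normal(size=(80, 6))
Z = pipe.fit_transform(X)
print(Z.shape)
```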

- transform(X)

Apply dimensionality reduction to X.

X is projected on the first principal components previously extracted from a training set, using minibatches of size batch_size if X is sparse.

- Parameters:

- X : {array-like, sparse matrix} of shape (n_samples, n_features)

New data, where n_samples is the number of samples and n_features is the number of features.

- Returns:

- X_new : ndarray of shape (n_samples, n_components)

Projection of X in the first principal components.

Examples

```python
>>> import numpy as np
>>> from sklearn.decomposition import IncrementalPCA
>>> X = np.array([[-1, -1], [-2, -1], [-3, -2],
...               [1, 1], [2, 1], [3, 2]])
>>> ipca = IncrementalPCA(n_components=2, batch_size=3)
>>> ipca.fit(X)
IncrementalPCA(batch_size=3, n_components=2)
>>> ipca.transform(X)
```
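As described for transform, sparse input is densified one batch of batch_size_ rows at a time. A small check, assuming SciPy's csr_matrix as the sparse container and arbitrary mostly-zero data: projecting the sparse and dense versions of the same matrix should give the same result.

```python
import numpy as np
from scipy import sparse
from sklearn.decomposition import IncrementalPCA

rng = np.random.default_rng(11)
X = rng.normal(size=(90, 6))
X[rng.random(X.shape) < 0.7] = 0.0  # make most entries zero
X_sparse = sparse.csr_matrix(X)

ipca = IncrementalPCA(n_components=3, batch_size=30).fit(X)
Z_dense = ipca.transform(X)
Z_sparse = ipca.transform(X_sparse)  # densified in batches of batch_size_ rows
print(np.allclose(Z_dense, Z_sparse))
```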