learning_curve

- sklearn.model_selection.learning_curve(estimator, X, y, *, groups=None, train_sizes=array([0.1, 0.33, 0.55, 0.78, 1.]), cv=None, scoring=None, exploit_incremental_learning=False, n_jobs=None, pre_dispatch='all', verbose=0, shuffle=False, random_state=None, error_score=nan, return_times=False, params=None)

Learning curve.

Determines cross-validated training and test scores for different training set sizes.

A cross-validation generator splits the whole dataset k times into training and test data. Subsets of the training set with varying sizes are used to train the estimator, and a score is computed for each training subset size and for the test set. The scores are returned per run, so they can afterwards be averaged over all k runs for each training subset size.

Read more in the User Guide.
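The procedure described above can be sketched by hand. This is a minimal illustration, not the library's implementation: the Ridge regressor, the synthetic data, the unshuffled KFold splitter and the absolute training sizes are all assumptions chosen for the example. Under those assumptions the manual loop is expected to yield the same per-fold scores as learning_curve.

```python
import numpy as np
from sklearn.base import clone
from sklearn.datasets import make_regression
from sklearn.linear_model import Ridge
from sklearn.model_selection import KFold, learning_curve

# Synthetic regression data (illustrative only).
X, y = make_regression(n_samples=100, n_features=5, noise=10.0, random_state=0)
estimator = Ridge(alpha=1.0)
sizes = [20, 40, 80]    # absolute training subset sizes
cv = KFold(n_splits=5)  # no shuffling, so the splits are deterministic

manual_train = np.empty((len(sizes), cv.get_n_splits()))
manual_test = np.empty_like(manual_train)
for fold, (train_idx, test_idx) in enumerate(cv.split(X, y)):
    for tick, n in enumerate(sizes):
        subset = train_idx[:n]  # prefix of this fold's training indices
        model = clone(estimator).fit(X[subset], y[subset])
        manual_train[tick, fold] = model.score(X[subset], y[subset])
        manual_test[tick, fold] = model.score(X[test_idx], y[test_idx])

sizes_abs, train_scores, test_scores = learning_curve(
    estimator, X, y, train_sizes=sizes, cv=cv
)

# One row per training size, one column per fold; averaging over the
# fold axis gives one point of the learning curve per training size.
mean_train = train_scores.mean(axis=1)
mean_test = test_scores.mean(axis=1)
```

Each row of the score arrays corresponds to one training size and each column to one fold, which is why the averaging is done along axis 1.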

- Parameters:

- estimator : object type that implements the “fit” method

An object of that type which is cloned for each validation. It must also implement “predict” unless scoring is a callable that doesn’t rely on “predict” to compute a score.

- X : {array-like, sparse matrix} of shape (n_samples, n_features)

Training vector, where n_samples is the number of samples and n_features is the number of features.

- y : array-like of shape (n_samples,) or (n_samples, n_outputs) or None

Target relative to X for classification or regression; None for unsupervised learning.

- groups : array-like of shape (n_samples,), default=None

Group labels for the samples used while splitting the dataset into train/test set. Only used in conjunction with a “Group” cv instance (e.g., GroupKFold).

Changed in version 1.6: groups can only be passed if metadata routing is not enabled via sklearn.set_config(enable_metadata_routing=True). When routing is enabled, pass groups alongside other metadata via the params argument instead. E.g.: learning_curve(..., params={'groups': groups}).

- train_sizes : array-like of shape (n_ticks,), default=np.linspace(0.1, 1.0, 5)

Relative or absolute numbers of training examples that will be used to generate the learning curve. If the dtype is float, it is regarded as a fraction of the maximum size of the training set (that is determined by the selected validation method), i.e. it has to be within (0, 1]. Otherwise it is interpreted as absolute sizes of the training sets. Note that for classification the number of samples usually has to be big enough to contain at least one sample from each class.
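The difference between fractional and absolute train_sizes can be seen in a small sketch. The data set, the LinearRegression estimator and the chosen sizes are assumptions made for illustration. With 100 samples and 5-fold cross-validation, each training split holds 80 samples, so fractions are taken relative to 80 rather than 100.

```python
from sklearn.datasets import make_regression
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import learning_curve

X, y = make_regression(n_samples=100, n_features=5, random_state=0)

# Float entries: fractions of the maximum training set size (80 here).
sizes_from_fractions, _, _ = learning_curve(
    LinearRegression(), X, y, cv=5, train_sizes=[0.25, 0.5, 1.0]
)

# Integer entries: absolute numbers of training samples.
sizes_from_counts, _, _ = learning_curve(
    LinearRegression(), X, y, cv=5, train_sizes=[20, 40, 80]
)

print(sizes_from_fractions)  # [20 40 80]
print(sizes_from_counts)     # [20 40 80]
```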

- cv : int, cross-validation generator or an iterable, default=None

Determines the cross-validation splitting strategy. Possible inputs for cv are:

None, to use the default 5-fold cross validation,

int, to specify the number of folds in a (Stratified)KFold,

an iterable yielding (train, test) splits as arrays of indices.

For int/None inputs, if the estimator is a classifier and y is either binary or multiclass, StratifiedKFold is used. In all other cases, KFold is used. These splitters are instantiated with shuffle=False so the splits will be the same across calls.

Refer to the User Guide for the various cross-validation strategies that can be used here.

Changed in version 0.22: cv default value if None changed from 3-fold to 5-fold.

- scoring : str or callable, default=None

Scoring method to use to evaluate the training and test sets.

str: see String name scorers for options.

callable: a scorer callable object (e.g., function) with signature scorer(estimator, X, y). See Callable scorers for details.

None: the estimator’s default evaluation criterion is used.
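The cv and scoring options can be combined as in the following sketch. The ShuffleSplit configuration, the Ridge estimator, the synthetic data and the helper neg_mae are illustrative assumptions, not part of the reference. The helper shows one possible callable with the scorer(estimator, X, y) signature.

```python
from sklearn.datasets import make_regression
from sklearn.linear_model import Ridge
from sklearn.metrics import mean_absolute_error
from sklearn.model_selection import ShuffleSplit, learning_curve

X, y = make_regression(n_samples=120, n_features=5, noise=5.0, random_state=0)

# An explicit splitter instead of an int: 10 random 80/20 splits.
cv = ShuffleSplit(n_splits=10, test_size=0.2, random_state=0)

# scoring given as a string name.
_, train_mse, test_mse = learning_curve(
    Ridge(), X, y, cv=cv, scoring="neg_mean_squared_error",
    train_sizes=[0.5, 1.0],
)


def neg_mae(estimator, X, y):
    """Hypothetical scorer: negated MAE, so that greater is better."""
    return -mean_absolute_error(y, estimator.predict(X))


# scoring given as a callable with the signature scorer(estimator, X, y).
_, train_mae, test_mae = learning_curve(
    Ridge(), X, y, cv=cv, scoring=neg_mae, train_sizes=[0.5, 1.0]
)
```

Both score arrays have one row per training size and one column per split, and both error-based scores are non-positive because they are negated.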

- exploit_incremental_learning : bool, default=False

If the estimator supports incremental learning, this will be used to speed up fitting for different training set sizes.
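A short sketch of this option, assuming an SGDClassifier (which implements partial_fit) on synthetic data; the estimator and sizes are chosen for illustration only.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import SGDClassifier
from sklearn.model_selection import learning_curve

X, y = make_classification(n_samples=200, n_features=10, random_state=0)

# The estimator must provide partial_fit for incremental learning to apply.
sizes, train_scores, test_scores = learning_curve(
    SGDClassifier(random_state=0),
    X,
    y,
    cv=5,
    train_sizes=[0.25, 0.5, 1.0],
    exploit_incremental_learning=True,
)
```

With this option the model for a larger training size is obtained by continuing to train on the additional samples rather than refitting from scratch, so the resulting scores may differ from those of a full refit.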

- n_jobs : int, default=None

Number of jobs to run in parallel. Training the estimator and computing the score are parallelized over the different training and test sets. None means 1 unless in a joblib.parallel_backend context. -1 means using all processors. See Glossary for more details.

- pre_dispatch : int or str, default='all'

Number of predispatched jobs for parallel execution (default is all). The option can reduce the allocated memory. The str can be an expression like '2*n_jobs'.

- verbose : int, default=0

Controls the verbosity: the higher, the more messages.

- shuffle : bool, default=False

Whether to shuffle training data before taking prefixes of it based on train_sizes.
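The following sketch shows shuffling combined with a fixed seed. The helper run, the decision tree and the data are assumptions for illustration. Two calls with the same random_state are expected to shuffle the training indices identically and therefore return identical scores.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.model_selection import learning_curve
from sklearn.tree import DecisionTreeClassifier

X, y = make_classification(n_samples=100, n_features=10, random_state=42)
tree = DecisionTreeClassifier(max_depth=4, random_state=42)


def run(seed):
    """Hypothetical helper: one shuffled learning curve for a given seed."""
    _, train_scores, test_scores = learning_curve(
        tree, X, y, train_sizes=[0.3, 0.6, 0.9], shuffle=True, random_state=seed
    )
    return train_scores, test_scores


first = run(0)
second = run(0)

# Same seed, same shuffled prefixes, hence identical score arrays.
same = all(np.array_equal(a, b) for a, b in zip(first, second))
```

Shuffling is mainly useful when the rows are ordered, for example sorted by class, so that a plain prefix of the training data would not be representative.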

- random_state : int, RandomState instance or None, default=None

Used when shuffle is True. Pass an int for reproducible output across multiple function calls. See Glossary.

- error_score : ‘raise’ or numeric, default=np.nan

Value to assign to the score if an error occurs in estimator fitting. If set to ‘raise’, the error is raised. If a numeric value is given, FitFailedWarning is raised.

Added in version 0.20.

- return_times : bool, default=False

Whether to return the fit and score times.

- params : dict, default=None

Parameters to pass to the fit method of the estimator and to the scorer.

If enable_metadata_routing=False (default): Parameters directly passed to the fit method of the estimator.

If enable_metadata_routing=True: Parameters safely routed to the fit method of the estimator. See Metadata Routing User Guide for more details.

Added in version 1.6.

- Returns:

- train_sizes_abs : array of shape (n_unique_ticks,)

Numbers of training examples that have been used to generate the learning curve. Note that the number of ticks might be less than n_ticks because duplicate entries will be removed.

- train_scores : array of shape (n_ticks, n_cv_folds)

Scores on training sets.

- test_scores : array of shape (n_ticks, n_cv_folds)

Scores on test set.

- fit_times : array of shape (n_ticks, n_cv_folds)

Times spent for fitting in seconds. Only present if return_times is True.

- score_times : array of shape (n_ticks, n_cv_folds)

Times spent for scoring in seconds. Only present if return_times is True.
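As a sketch of the return values, the call below unpacks all five arrays with return_times=True. The Ridge estimator, the data and the sizes are assumptions for illustration, and the measured times depend on the machine.

```python
from sklearn.datasets import make_regression
from sklearn.linear_model import Ridge
from sklearn.model_selection import learning_curve

X, y = make_regression(n_samples=100, n_features=5, random_state=0)

# With return_times=True the function returns five arrays instead of three.
sizes, train_scores, test_scores, fit_times, score_times = learning_curve(
    Ridge(), X, y, cv=5, train_sizes=[0.2, 0.6, 1.0], return_times=True
)

# Average the per-fold timings for each training size.
for n, fit_t, score_t in zip(
    sizes, fit_times.mean(axis=1), score_times.mean(axis=1)
):
    print(f"{n} samples: fit {fit_t:.4f}s, score {score_t:.4f}s on average")
```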

See also

LearningCurveDisplay.from_estimator : Plot a learning curve using an estimator and data.

Examples

    >>> from sklearn.datasets import make_classification
    >>> from sklearn.tree import DecisionTreeClassifier
    >>> from sklearn.model_selection import learning_curve
    >>> X, y = make_classification(n_samples=100, n_features=10, random_state=42)
    >>> tree = DecisionTreeClassifier(max_depth=4, random_state=42)
    >>> train_size_abs, train_scores, test_scores = learning_curve(
    ...     tree, X, y, train_sizes=[0.3, 0.6, 0.9]
    ... )
    >>> for train_size, cv_train_scores, cv_test_scores in zip(
    ...     train_size_abs, train_scores, test_scores
    ... ):
    ...     print(f"{train_size} samples were used to train the model")
    ...     print(f"The average train accuracy is {cv_train_scores.mean():.2f}")
    ...     print(f"The average test accuracy is {cv_test_scores.mean():.2f}")
    24 samples were used to train the model
    The average train accuracy is 1.00
    The average test accuracy is 0.85
    48 samples were used to train the model
    The average train accuracy is 1.00
    The average test accuracy is 0.90
    72 samples were used to train the model
    The average train accuracy is 1.00
    The average test accuracy is 0.93

Gallery examples

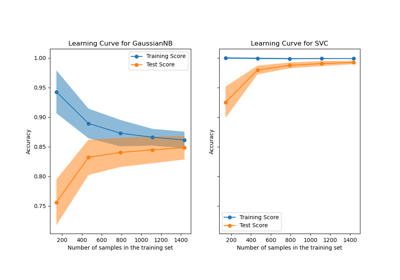

Plotting Learning Curves and Checking Models’ Scalability