hinge_loss

- sklearn.metrics.hinge_loss(y_true, pred_decision, *, labels=None, sample_weight=None)

Average hinge loss (non-regularized).

In the binary case, assuming the labels in `y_true` are encoded with +1 and -1, when a prediction mistake is made, `margin = y_true * pred_decision` is always negative (since the signs are opposite), implying `1 - margin` is always greater than 1. The cumulated hinge loss is therefore an upper bound of the number of mistakes made by the classifier.

In the multiclass case, the function expects that either all the labels are present in `y_true` or an optional `labels` argument is provided which contains all the labels. The multiclass margin is calculated according to Crammer-Singer’s method. As in the binary case, the cumulated hinge loss is an upper bound of the number of mistakes made by the classifier.

Read more in the User Guide.
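The binary-case reasoning above can be checked by hand. The following is a minimal NumPy sketch, not the library's implementation: the helper name `binary_hinge_losses` and the sample values are made up for illustration, and labels are assumed to be already encoded as +1 and -1.

```python
import numpy as np


def binary_hinge_losses(y_true, pred_decision):
    """Per-sample hinge losses, assuming labels are encoded as +1 / -1.

    Hypothetical helper for illustration only.
    """
    y_true = np.asarray(y_true, dtype=float)
    pred_decision = np.asarray(pred_decision, dtype=float)
    margin = y_true * pred_decision
    # Samples with margin >= 1 contribute no loss.
    return np.maximum(0.0, 1.0 - margin)


y_true = [-1, 1, 1, -1]
pred_decision = [-2.0, 0.5, -0.3, 1.2]

losses = binary_hinge_losses(y_true, pred_decision)

# A mistake is a prediction whose sign disagrees with the true label.
mistakes = int(np.sum(np.sign(pred_decision) != np.asarray(y_true)))

print("per-sample losses:", losses)
print("cumulated loss:", losses.sum(), "mistakes:", mistakes)
print("average loss:", losses.mean())
```

Each misclassified sample has a negative margin and so contributes more than 1 to the sum, which is why the cumulated loss cannot fall below the mistake count. The second sample is classified correctly but still incurs a loss because its margin is below 1.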

- Parameters:

- y_true : array-like of shape (n_samples,)

True target. For binary data, it should only contain two unique values, with the positive label being greater than the negative label. For multiclass data, all labels should be present, or provided via `labels`.

- pred_decision : array-like of shape (n_samples,) or (n_samples, n_classes)

Predicted decisions, as output by decision_function (floats).

- labels : array-like, default=None

Contains all the labels for the problem. Used in multiclass hinge loss.

- sample_weight : array-like of shape (n_samples,), default=None

Sample weights.

- Returns:

- loss : float

Average hinge loss.
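As a rough illustration of `sample_weight`, the sketch below compares the returned value with a weighted average of per-sample losses computed by hand. It assumes the weights enter as a weighted mean (sum of weight times loss, divided by the sum of weights); the weight values are invented for the example.

```python
import numpy as np
from sklearn.metrics import hinge_loss

y_true = np.array([-1, 1, 1])
pred_decision = np.array([-2.18, 2.36, 0.09])
weights = np.array([1.0, 1.0, 2.0])  # illustrative values only

unweighted = hinge_loss(y_true, pred_decision)
weighted = hinge_loss(y_true, pred_decision, sample_weight=weights)

# Hand computation under the weighted-mean assumption stated above.
per_sample = np.maximum(0.0, 1.0 - y_true * pred_decision)
expected = np.average(per_sample, weights=weights)

print("unweighted:", unweighted)
print("weighted:", weighted, "hand-computed:", expected)
```

Only the third sample has a margin below 1, so doubling its weight raises the average loss.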

Examples

    >>> from sklearn import svm
    >>> from sklearn.metrics import hinge_loss
    >>> X = [[0], [1]]
    >>> y = [-1, 1]
    >>> est = svm.LinearSVC(random_state=0)
    >>> est.fit(X, y)
    LinearSVC(random_state=0)
    >>> pred_decision = est.decision_function([[-2], [3], [0.5]])
    >>> pred_decision
    array([-2.18,  2.36,  0.09])
    >>> hinge_loss([-1, 1, 1], pred_decision)
    0.30

In the multiclass case:

    >>> import numpy as np
    >>> X = np.array([[0], [1], [2], [3]])
    >>> Y = np.array([0, 1, 2, 3])
    >>> labels = np.array([0, 1, 2, 3])
    >>> est = svm.LinearSVC()
    >>> est.fit(X, Y)
    LinearSVC()
    >>> pred_decision = est.decision_function([[-1], [2], [3]])
    >>> y_true = [0, 2, 3]
    >>> hinge_loss(y_true, pred_decision, labels=labels)
    0.56
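The multiclass margin can also be worked through by hand. The sketch below takes the Crammer-Singer margin to be the score of the true class minus the highest score among the other classes. The helper `multiclass_hinge_losses` and the score matrix are hypothetical, and the true labels are assumed to be column indices into that matrix already.

```python
import numpy as np


def multiclass_hinge_losses(y_true_idx, scores):
    """Per-sample multiclass hinge losses (hypothetical helper).

    y_true_idx holds the column index of the true class for each row
    of scores, which has shape (n_samples, n_classes).
    """
    scores = np.asarray(scores, dtype=float)
    y_true_idx = np.asarray(y_true_idx)
    rows = np.arange(scores.shape[0])

    true_scores = scores[rows, y_true_idx]

    # Mask out the true class, then take the best competing score.
    others = scores.copy()
    others[rows, y_true_idx] = -np.inf
    best_other = others.max(axis=1)

    margin = true_scores - best_other
    return np.maximum(0.0, 1.0 - margin)


# Made-up decision values for 3 samples and 3 classes.
scores = [
    [2.0, 0.5, -1.0],   # true class 0: margin 1.5, no loss
    [0.2, 0.1, 0.9],    # true class 2: margin 0.7, loss 0.3
    [1.5, 1.0, -0.5],   # true class 1: margin -0.5, loss 1.5 (a mistake)
]
y_true_idx = [0, 2, 1]

losses = multiclass_hinge_losses(y_true_idx, scores)
print("per-sample losses:", losses)
print("average loss:", losses.mean())
```

As in the binary case, the one misclassified sample contributes more than 1 to the cumulated loss.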

Gallery examples

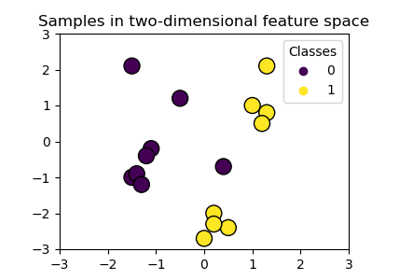

Plot classification boundaries with different SVM Kernels