AdaBoostRegressor

- class sklearn.ensemble.AdaBoostRegressor(estimator=None, *, n_estimators=50, learning_rate=1.0, loss='linear', random_state=None)

An AdaBoost regressor.

An AdaBoost [1] regressor is a meta-estimator that begins by fitting a regressor on the original dataset and then fits additional copies of the regressor on the same dataset but where the weights of instances are adjusted according to the error of the current prediction. As such, subsequent regressors focus more on difficult cases.

This class implements the algorithm known as AdaBoost.R2 [2].
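The reweighting idea described above can be sketched in plain Python. This is a minimal illustration of one AdaBoost.R2-style round that follows Drucker's description [2], not scikit-learn's actual source. The helper name `reweight_samples` is hypothetical, and the sketch assumes the weighted average loss lies strictly between 0 and 0.5, the regime in which boosting continues.

```python
import math


def reweight_samples(sample_weight, normalized_loss, learning_rate=1.0):
    """One illustrative AdaBoost.R2-style reweighting step.

    `normalized_loss` holds per-sample losses already scaled to [0, 1].
    Assumes 0 < weighted average loss < 0.5.
    """
    total = sum(sample_weight)
    weights = [w / total for w in sample_weight]

    # Weighted average loss of the regressor fitted in this round.
    avg_loss = sum(w * l for w, l in zip(weights, normalized_loss))

    # beta < 1 whenever the average loss is below 0.5.
    beta = avg_loss / (1.0 - avg_loss)

    # Low-loss samples are shrunk the most, so difficult samples
    # gain relative weight once the weights are renormalized.
    updated = [
        w * beta ** ((1.0 - l) * learning_rate)
        for w, l in zip(weights, normalized_loss)
    ]
    norm = sum(updated)
    new_weights = [w / norm for w in updated]

    # Weight of this regressor in the final weighted median.
    estimator_weight = learning_rate * math.log(1.0 / beta)
    return new_weights, estimator_weight


new_weights, estimator_weight = reweight_samples(
    [0.25, 0.25, 0.25, 0.25], [0.0, 0.0, 0.0, 1.0]
)
print(new_weights)
print(estimator_weight)
```

In this toy input the single badly predicted sample ends up with half of the total weight, which is the sense in which later regressors focus on difficult cases.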

Read more in the User Guide.

Added in version 0.14.

- Parameters:

- estimator : object, default=None

The base estimator from which the boosted ensemble is built. If None, then the base estimator is DecisionTreeRegressor initialized with max_depth=3.

Added in version 1.2: base_estimator was renamed to estimator.

- n_estimators : int, default=50

The maximum number of estimators at which boosting is terminated. In case of perfect fit, the learning procedure is stopped early. Values must be in the range [1, inf).

- learning_rate : float, default=1.0

Weight applied to each regressor at each boosting iteration. A higher learning rate increases the contribution of each regressor. There is a trade-off between the learning_rate and n_estimators parameters. Values must be in the range (0.0, inf).

- loss : {'linear', 'square', 'exponential'}, default='linear'

The loss function to use when updating the weights after each boosting iteration.

- random_state : int, RandomState instance or None, default=None

Controls the random seed given to each estimator at each boosting iteration. Thus, it is only used when estimator exposes a random_state. In addition, it controls the bootstrap of the weights used to train the estimator at each boosting iteration. Pass an int for reproducible output across multiple function calls. See Glossary.
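To make the three loss options concrete, here is a small plain-Python sketch of how per-sample losses could be derived from absolute errors, following the definitions in Drucker [2]. The function name `normalized_losses` is hypothetical, and the exact internals of scikit-learn may differ in detail.

```python
import math


def normalized_losses(y_true, y_pred, loss="linear"):
    """Per-sample losses in [0, 1] for an AdaBoost.R2-style update."""
    abs_err = [abs(t - p) for t, p in zip(y_true, y_pred)]
    max_err = max(abs_err)
    if max_err == 0.0:
        # Perfect fit: every sample has zero loss.
        return [0.0] * len(abs_err)

    # Scale errors by the largest error so they fall in [0, 1].
    scaled = [e / max_err for e in abs_err]

    if loss == "linear":
        return scaled
    if loss == "square":
        return [s ** 2 for s in scaled]
    if loss == "exponential":
        return [1.0 - math.exp(-s) for s in scaled]
    raise ValueError(f"unknown loss: {loss!r}")


y_true = [0.0, 0.0, 0.0]
y_pred = [0.0, 1.0, 2.0]
linear = normalized_losses(y_true, y_pred, "linear")
square = normalized_losses(y_true, y_pred, "square")
exponential = normalized_losses(y_true, y_pred, "exponential")
print(linear, square, exponential)
```

Relative to 'linear', the 'square' option shrinks the loss of moderately wrong samples, while 'exponential' compresses the losses into a narrower range below 1.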

- Attributes:

- estimator_ : estimator

The base estimator from which the ensemble is grown.

Added in version 1.2: base_estimator_ was renamed to estimator_.

- estimators_ : list of regressors

The collection of fitted sub-estimators.

- estimator_weights_ : ndarray of floats

Weights for each estimator in the boosted ensemble.

- estimator_errors_ : ndarray of floats

Regression error for each estimator in the boosted ensemble.

- feature_importances_ : ndarray of shape (n_features,)

The impurity-based feature importances.

- n_features_in_ : int

Number of features seen during fit.

Added in version 0.24.

- feature_names_in_ : ndarray of shape (n_features_in_,)

Names of features seen during fit. Defined only when X has feature names that are all strings.

Added in version 1.0.
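As a brief illustration, the fitted attributes can be inspected after calling fit. The dataset and hyperparameters below are arbitrary choices for the sketch. Because boosting may stop early, the weight and error arrays are sliced to the number of regressors that were actually fitted.

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import AdaBoostRegressor

# Synthetic data, used only for illustration.
X, y = make_regression(
    n_samples=200, n_features=4, n_informative=2, random_state=0
)
regr = AdaBoostRegressor(n_estimators=20, random_state=0).fit(X, y)

# Number of regressors actually fitted (at most n_estimators).
n_fitted = len(regr.estimators_)
print(n_fitted)

# Per-regressor weights and errors for the fitted stages.
print(regr.estimator_weights_[:n_fitted])
print(regr.estimator_errors_[:n_fitted])

# One impurity-based importance value per input feature.
print(regr.feature_importances_)
```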

See also

AdaBoostClassifier : An AdaBoost classifier.

GradientBoostingRegressor : Gradient Boosting for regression.

sklearn.tree.DecisionTreeRegressor : A decision tree regressor.

References

[1] Y. Freund, R. Schapire, “A Decision-Theoretic Generalization of on-Line Learning and an Application to Boosting”, 1995.

[2] Drucker, “Improving Regressors using Boosting Techniques”, 1997.

Examples

>>> from sklearn.ensemble import AdaBoostRegressor
>>> from sklearn.datasets import make_regression
>>> X, y = make_regression(n_features=4, n_informative=2,
...                        random_state=0, shuffle=False)
>>> regr = AdaBoostRegressor(random_state=0, n_estimators=100)
>>> regr.fit(X, y)
AdaBoostRegressor(n_estimators=100, random_state=0)
>>> regr.predict([[0, 0, 0, 0]])
array([4.7972])
>>> regr.score(X, y)
0.9771

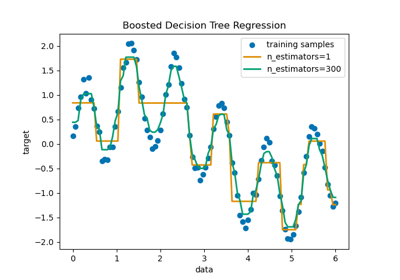

For a detailed example of utilizing AdaBoostRegressor to fit a sequence of decision trees as weak learners, please refer to Decision Tree Regression with AdaBoost.

- fit(X, y, sample_weight=None)

Build a boosted regressor from the training set (X, y).

- Parameters:

- X : {array-like, sparse matrix} of shape (n_samples, n_features)

The training input samples. Sparse matrix can be CSC, CSR, COO, DOK, or LIL. COO, DOK, and LIL are converted to CSR.

- y : array-like of shape (n_samples,)

The target values.

- sample_weight : array-like of shape (n_samples,), default=None

Sample weights. If None, the sample weights are initialized to 1 / n_samples.

- Returns:

- self : object

Fitted estimator.

- get_metadata_routing()

Raise NotImplementedError.

This estimator does not support metadata routing yet.

- get_params(deep=True)

Get parameters for this estimator.

- Parameters:

- deep : bool, default=True

If True, will return the parameters for this estimator and contained subobjects that are estimators.

- Returns:

- params : dict

Parameter names mapped to their values.

- predict(X)

Predict regression value for X.

The predicted regression value of an input sample is computed as the weighted median prediction of the regressors in the ensemble.

- Parameters:

- X : {array-like, sparse matrix} of shape (n_samples, n_features)

The input samples. Sparse matrix can be CSC, CSR, COO, DOK, or LIL. COO, DOK, and LIL are converted to CSR.

- Returns:

- y : ndarray of shape (n_samples,)

The predicted regression values.
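The weighted median mentioned above can be illustrated for a single input sample in plain Python. This is a sketch of the general technique under the assumption that the median is the first sorted prediction whose cumulative weight reaches half of the total. The helper name `weighted_median` is hypothetical and is not part of scikit-learn.

```python
def weighted_median(predictions, weights):
    """Weighted median of per-regressor predictions for one sample."""
    # Visit the predictions in increasing order.
    order = sorted(range(len(predictions)), key=lambda i: predictions[i])
    half = 0.5 * sum(weights)

    running = 0.0
    for i in order:
        running += weights[i]
        # First prediction whose cumulative weight reaches half the total.
        if running >= half:
            return predictions[i]
    return predictions[order[-1]]


balanced = weighted_median([1.0, 2.0, 10.0], [0.2, 0.5, 0.3])
dominated = weighted_median([10.0, 1.0, 2.0], [0.8, 0.1, 0.1])
equal = weighted_median([3.0, 1.0, 2.0], [1.0, 1.0, 1.0])
print(balanced, dominated, equal)
```

Unlike a weighted mean, the result is always one of the individual regressors' predictions, which makes the ensemble output less sensitive to a single extreme prediction with low weight.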

- score(X, y, sample_weight=None)

Return coefficient of determination on test data.

The coefficient of determination, \(R^2\), is defined as \((1 - \frac{u}{v})\), where \(u\) is the residual sum of squares ((y_true - y_pred) ** 2).sum() and \(v\) is the total sum of squares ((y_true - y_true.mean()) ** 2).sum(). The best possible score is 1.0 and it can be negative (because the model can be arbitrarily worse). A constant model that always predicts the expected value of y, disregarding the input features, would get an \(R^2\) score of 0.0.

- Parameters:

- X : array-like of shape (n_samples, n_features)

Test samples. For some estimators this may be a precomputed kernel matrix or a list of generic objects instead with shape (n_samples, n_samples_fitted), where n_samples_fitted is the number of samples used in the fitting for the estimator.

- y : array-like of shape (n_samples,) or (n_samples, n_outputs)

True values for X.

- sample_weight : array-like of shape (n_samples,), default=None

Sample weights.

- Returns:

- score : float

\(R^2\) of self.predict(X) w.r.t. y.

Notes

The \(R^2\) score used when calling score on a regressor uses multioutput='uniform_average' from version 0.23 to keep consistent with default value of r2_score. This influences the score method of all the multioutput regressors (except for MultiOutputRegressor).
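The definition of \(R^2\) given for score can be checked by hand with a few lines of plain Python. This sketch covers only the unweighted, single-output case. The function name `r2` and the sample values are illustrative.

```python
def r2(y_true, y_pred):
    """Unweighted coefficient of determination, 1 - u / v."""
    mean_true = sum(y_true) / len(y_true)
    # u: residual sum of squares.
    u = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    # v: total sum of squares around the mean of y_true.
    v = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - u / v


y_true = [3.0, -0.5, 2.0, 7.0]
y_pred = [2.5, 0.0, 2.0, 8.0]

good_fit = r2(y_true, y_pred)
perfect_fit = r2(y_true, y_true)
# A constant model that always predicts the mean of y_true.
mean_model = r2(y_true, [sum(y_true) / len(y_true)] * len(y_true))
print(good_fit, perfect_fit, mean_model)
```

Here u = 1.5 and v = 29.1875, so the first score is roughly 0.949, while the constant mean predictor scores 0.0 and a perfect prediction scores 1.0.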

- set_fit_request(*, sample_weight: bool | None | str = '$UNCHANGED$') -> AdaBoostRegressor

Configure whether metadata should be requested to be passed to the fit method.

Note that this method is only relevant when this estimator is used as a sub-estimator within a meta-estimator and metadata routing is enabled with enable_metadata_routing=True (see sklearn.set_config). Please check the User Guide on how the routing mechanism works.

The options for each parameter are:

- True: metadata is requested, and passed to fit if provided. The request is ignored if metadata is not provided.
- False: metadata is not requested and the meta-estimator will not pass it to fit.
- None: metadata is not requested, and the meta-estimator will raise an error if the user provides it.
- str: metadata should be passed to the meta-estimator with this given alias instead of the original name.

The default (sklearn.utils.metadata_routing.UNCHANGED) retains the existing request. This allows you to change the request for some parameters and not others.

Added in version 1.3.

- Parameters:

- sample_weight : str, True, False, or None, default=sklearn.utils.metadata_routing.UNCHANGED

Metadata routing for sample_weight parameter in fit.

- Returns:

- self : object

The updated object.

- set_params(**params)

Set the parameters of this estimator.

The method works on simple estimators as well as on nested objects (such as Pipeline). The latter have parameters of the form <component>__<parameter> so that it’s possible to update each component of a nested object.

- Parameters:

- **params : dict

Estimator parameters.

- Returns:

- self : estimator instance

Estimator instance.

- set_score_request(*, sample_weight: bool | None | str = '$UNCHANGED$') -> AdaBoostRegressor

Configure whether metadata should be requested to be passed to the score method.

Note that this method is only relevant when this estimator is used as a sub-estimator within a meta-estimator and metadata routing is enabled with enable_metadata_routing=True (see sklearn.set_config). Please check the User Guide on how the routing mechanism works.

The options for each parameter are:

- True: metadata is requested, and passed to score if provided. The request is ignored if metadata is not provided.
- False: metadata is not requested and the meta-estimator will not pass it to score.
- None: metadata is not requested, and the meta-estimator will raise an error if the user provides it.
- str: metadata should be passed to the meta-estimator with this given alias instead of the original name.

The default (sklearn.utils.metadata_routing.UNCHANGED) retains the existing request. This allows you to change the request for some parameters and not others.

Added in version 1.3.

- Parameters:

- sample_weight : str, True, False, or None, default=sklearn.utils.metadata_routing.UNCHANGED

Metadata routing for sample_weight parameter in score.

- Returns:

- self : object

The updated object.

- staged_predict(X)

Return staged predictions for X.

The predicted regression value of an input sample is computed as the weighted median prediction of the regressors in the ensemble.

This generator method yields the ensemble prediction after each iteration of boosting and therefore allows monitoring, such as to determine the prediction on a test set after each boost.

- Parameters:

- X : {array-like, sparse matrix} of shape (n_samples, n_features)

The input samples.

- Yields:

- y : generator of ndarray of shape (n_samples,)

The predicted regression values.
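One way to use the staged generators for monitoring is sketched below: the test error is tracked after each boosting iteration and the stage with the lowest error is reported. The dataset, the train/test split, and the use of mean squared error are assumptions made for the example. The staged_score method of this class is used alongside staged_predict.

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import AdaBoostRegressor
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import train_test_split

# Synthetic noisy data, used only for illustration.
X, y = make_regression(
    n_samples=300, n_features=4, n_informative=2, noise=10.0, random_state=0
)
X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=0)

regr = AdaBoostRegressor(n_estimators=30, random_state=0)
regr.fit(X_train, y_train)

# Test-set error of the ensemble after each boosting iteration.
stage_mse = [
    mean_squared_error(y_test, y_stage)
    for y_stage in regr.staged_predict(X_test)
]

# R^2 after each iteration; the last value matches regr.score.
stage_r2 = list(regr.staged_score(X_test, y_test))

# 1-based index of the iteration with the lowest test error.
best_stage = min(range(len(stage_mse)), key=stage_mse.__getitem__) + 1
print(best_stage, stage_mse[best_stage - 1])
```

If the best stage is well below n_estimators, a smaller ensemble may generalize as well as the full one on this data.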

- staged_score(X, y, sample_weight=None)

Return staged scores for X, y.

This generator method yields the ensemble score after each iteration of boosting and therefore allows monitoring, such as to determine the score on a test set after each boost.

- Parameters:

- X : {array-like, sparse matrix} of shape (n_samples, n_features)

The input samples. Sparse matrix can be CSC, CSR, COO, DOK, or LIL. COO, DOK, and LIL are converted to CSR.

- y : array-like of shape (n_samples,)

Target values for X.

- sample_weight : array-like of shape (n_samples,), default=None

Sample weights.

- Yields:

- z : float