class_likelihood_ratios

- sklearn.metrics.class_likelihood_ratios(y_true, y_pred, *, labels=None, sample_weight=None, replace_undefined_by=nan)

Compute binary classification positive and negative likelihood ratios.

The positive likelihood ratio is

$$\text{LR}_+ = \frac{\text{sensitivity}}{1 - \text{specificity}}$$

where the sensitivity or recall is the ratio $tp / (tp + fn)$ and the specificity is $tn / (tn + fp)$. The negative likelihood ratio is

$$\text{LR}_- = \frac{1 - \text{sensitivity}}{\text{specificity}}$$

Here tp is the number of true positives, fp the number of false positives, tn the number of true negatives and fn the number of false negatives. Both class likelihood ratios can be used to obtain post-test probabilities given a pre-test probability.

Valid values of LR+ range from 1.0 to infinity. An LR+ of 1.0 indicates that the probability of predicting the positive class is the same for samples belonging to either class; therefore, the test is useless. The greater LR+ is, the more likely a positive prediction is to be a true positive compared with the pre-test probability. A value of LR+ lower than 1.0 is invalid, as it would indicate that the odds of a sample being a true positive decrease with respect to the pre-test odds.

Valid values of LR- range from 0.0 to 1.0. The closer it is to 0.0, the lower the probability that a sample predicted as negative is a false negative. An LR- of 1.0 means the test is useless because the odds of having the condition did not change after the test. A value of LR- greater than 1.0 invalidates the classifier, as it indicates an increase in the odds of a sample belonging to the positive class after being classified as negative. This is the case when the classifier systematically predicts the opposite of the true label.

A typical application in medicine is to identify the positive and negative classes with the presence and absence of a disease, respectively. The classifier is then a diagnostic test, the pre-test probability of an individual having the disease can be the prevalence of that disease (the proportion of a particular population found to be affected by the condition), and the post-test probability is the probability that the condition is truly present given a positive test result.
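The conversion from a pre-test to a post-test probability follows the odds form of Bayes' rule: post-test odds = pre-test odds × likelihood ratio. The sketch below assumes a hypothetical prevalence of 10% and reuses the ratios (1.5, 0.75) from the first example in the Examples section; `post_test_probability` is a hypothetical helper written for illustration, not part of scikit-learn.

```python
def post_test_probability(pre_test_probability: float, likelihood_ratio: float) -> float:
    """Hypothetical helper: update a probability with a likelihood ratio.

    Uses Bayes' rule in odds form: post-test odds = pre-test odds * LR.
    """
    if not 0.0 <= pre_test_probability < 1.0:
        raise ValueError("pre_test_probability must be in [0, 1)")
    # Probability -> odds.
    pre_test_odds = pre_test_probability / (1.0 - pre_test_probability)
    # Apply the likelihood ratio (LR+ after a positive prediction, LR- after a negative one).
    post_test_odds = pre_test_odds * likelihood_ratio
    # Odds -> probability.
    return post_test_odds / (1.0 + post_test_odds)


# Assumed pre-test probability (e.g. the prevalence of a disease).
prevalence = 0.10
lr_pos, lr_neg = 1.5, 0.75

p_given_positive = post_test_probability(prevalence, lr_pos)
p_given_negative = post_test_probability(prevalence, lr_neg)
print(f"P(condition | positive prediction) = {p_given_positive:.3f}")
print(f"P(condition | negative prediction) = {p_given_negative:.3f}")
```

With these numbers, a positive prediction raises the estimated probability from 0.10 to about 0.143 (exactly 1/7), while a negative prediction lowers it to about 0.077 (1/13).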

Read more in the User Guide.

- Parameters:

- y_true : 1d array-like, or label indicator array / sparse matrix

Ground truth (correct) target values. Sparse matrix is only supported when targets are of multilabel type.

- y_pred : 1d array-like, or label indicator array / sparse matrix

Estimated targets as returned by a classifier. Sparse matrix is only supported when targets are of multilabel type.

- labels : array-like, default=None

List of labels to index the matrix. This may be used to select the positive and negative classes with the ordering labels=[negative_class, positive_class]. If None is given, those that appear at least once in y_true or y_pred are used in sorted order.

- sample_weight : array-like of shape (n_samples,), default=None

Sample weights.

- replace_undefined_by : np.nan, 1.0, or dict, default=np.nan

Sets the return values for LR+ and LR- when there is a division by zero. Can take the following values:

  - np.nan to return np.nan for both LR+ and LR-;
  - 1.0 to return the worst possible scores: {"LR+": 1.0, "LR-": 1.0};
  - a dict in the format {"LR+": value_1, "LR-": value_2}, where the values can be non-negative floats, np.inf or np.nan within the range of the likelihood ratios. For example, {"LR+": 1.0, "LR-": 1.0} can be used for returning the worst scores, indicating a useless model, and {"LR+": np.inf, "LR-": 0.0} can be used for returning the best scores, indicating a useful model.

If a division by zero occurs, only the affected metric is replaced with the set value; the other metric is calculated as usual.

Added in version 1.7.
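The replacement rules for replace_undefined_by can be illustrated without scikit-learn. The sketch below is a hypothetical helper, `likelihood_ratios_from_counts`, that computes both ratios from confusion-matrix counts and substitutes the configured value only for the metric whose denominator is zero. It assumes the conditions listed in the Warns section of this page (fp == 0 leaves LR+ undefined, tn == 0 leaves LR- undefined, and no positive samples leaves both undefined, returned here as NaN); it is not scikit-learn's implementation.

```python
import math


def likelihood_ratios_from_counts(tp, fp, tn, fn, replace_undefined_by=math.nan):
    """Hypothetical helper (not part of scikit-learn): LR+ and LR- from counts.

    replace_undefined_by mimics the documented options: NaN, 1.0, or a dict
    {"LR+": value_1, "LR-": value_2}.
    """
    # A scalar applies to both metrics, so 1.0 yields the worst scores (1.0, 1.0).
    if isinstance(replace_undefined_by, dict):
        replacement = replace_undefined_by
    else:
        replacement = {"LR+": replace_undefined_by, "LR-": replace_undefined_by}

    support_pos = tp + fn  # samples whose true label is positive
    support_neg = tn + fp  # samples whose true label is negative

    if support_pos == 0:
        # Sensitivity is undefined, so both ratios are undefined (NaN here).
        return math.nan, math.nan

    # sensitivity / (1 - specificity) = (tp / support_pos) / (fp / support_neg)
    if fp == 0:
        lr_pos = replacement["LR+"]  # division by zero: only LR+ is replaced
    else:
        lr_pos = tp * support_neg / (fp * support_pos)

    # (1 - sensitivity) / specificity = (fn / support_pos) / (tn / support_neg)
    if tn == 0:
        lr_neg = replacement["LR-"]  # division by zero: only LR- is replaced
    else:
        lr_neg = fn * support_neg / (tn * support_pos)

    return lr_pos, lr_neg


# Counts from the first example on this page: tp=1, fp=1, tn=2, fn=1.
print(likelihood_ratios_from_counts(1, 1, 2, 1))  # (1.5, 0.75)

# No false positives: LR+ is undefined, LR- is still computed as usual.
print(likelihood_ratios_from_counts(2, 0, 3, 1))  # (nan, 0.3333333333333333)
print(likelihood_ratios_from_counts(2, 0, 3, 1, replace_undefined_by={"LR+": math.inf, "LR-": 0.0}))
# (inf, 0.3333333333333333)
```

Writing each ratio over integer counts (multiplying through by both supports) avoids nesting two divisions and makes the zero-denominator conditions explicit.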

- Returns:

- (positive_likelihood_ratio, negative_likelihood_ratio) : tuple of float

A tuple of two floats, the first containing the positive likelihood ratio (LR+) and the second the negative likelihood ratio (LR-).

- Warns:

An UndefinedMetricWarning is raised when y_true and y_pred lead to the following conditions:

- The number of false positives is 0: the positive likelihood ratio is undefined.
- The number of true negatives is 0: the negative likelihood ratio is undefined.
- The sum of true positives and false negatives is 0 (no samples of the positive class are present in y_true): both likelihood ratios are undefined.

For the first two cases, an undefined metric can be defined by setting the replace_undefined_by parameter.


Examples

```python
>>> import numpy as np
>>> from sklearn.metrics import class_likelihood_ratios
>>> class_likelihood_ratios([0, 1, 0, 1, 0], [1, 1, 0, 0, 0])
(1.5, 0.75)
>>> y_true = np.array(["non-cat", "cat", "non-cat", "cat", "non-cat"])
>>> y_pred = np.array(["cat", "cat", "non-cat", "non-cat", "non-cat"])
>>> class_likelihood_ratios(y_true, y_pred)
(1.33, 0.66)
>>> y_true = np.array(["non-zebra", "zebra", "non-zebra", "zebra", "non-zebra"])
>>> y_pred = np.array(["zebra", "zebra", "non-zebra", "non-zebra", "non-zebra"])
>>> class_likelihood_ratios(y_true, y_pred)
(1.5, 0.75)
```

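When labels is None, the classes are used in sorted order, so in the "cat" example above "cat" is taken as the negative class and "non-cat" as the positive class. To avoid such ambiguities, use the notation labels=[negative_class, positive_class]:

```python
>>> y_true = np.array(["non-cat", "cat", "non-cat", "cat", "non-cat"])
>>> y_pred = np.array(["cat", "cat", "non-cat", "non-cat", "non-cat"])
>>> class_likelihood_ratios(y_true, y_pred, labels=["non-cat", "cat"])
(1.5, 0.75)
```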
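The effect of the default label ordering on the "cat" examples can be reproduced by counting by hand. The sketch below assumes the documented convention labels=[negative_class, positive_class] and, when labels is None, sorts the labels found in y_true or y_pred; `binary_counts` and `likelihood_ratios` are hypothetical helpers for illustration, not scikit-learn functions.

```python
def binary_counts(y_true, y_pred, labels=None):
    """Hypothetical helper: return (tp, fp, tn, fn) with labels=[negative, positive]."""
    if labels is None:
        # Labels seen in either array, in sorted order: the last one is positive.
        labels = sorted(set(y_true) | set(y_pred))
    negative, positive = labels  # fails unless exactly two labels are given
    tp = fp = tn = fn = 0
    # Assumes every label is either the negative or the positive class.
    for t, p in zip(y_true, y_pred):
        if p == positive:
            if t == positive:
                tp += 1
            else:
                fp += 1
        else:
            if t == negative:
                tn += 1
            else:
                fn += 1
    return tp, fp, tn, fn


def likelihood_ratios(tp, fp, tn, fn):
    # LR+ = sensitivity / (1 - specificity) and LR- = (1 - sensitivity) / specificity,
    # rewritten over integer counts; raises ZeroDivisionError when a ratio is undefined.
    lr_pos = tp * (tn + fp) / (fp * (tp + fn))
    lr_neg = fn * (tn + fp) / (tn * (tp + fn))
    return lr_pos, lr_neg


y_true = ["non-cat", "cat", "non-cat", "cat", "non-cat"]
y_pred = ["cat", "cat", "non-cat", "non-cat", "non-cat"]

default_counts = binary_counts(y_true, y_pred)  # "non-cat" is positive
print(default_counts)                           # (2, 1, 1, 1)
print(likelihood_ratios(*default_counts))       # (1.3333333333333333, 0.6666666666666666)

cat_counts = binary_counts(y_true, y_pred, labels=["non-cat", "cat"])  # "cat" is positive
print(cat_counts)                               # (1, 1, 2, 1)
print(likelihood_ratios(*cat_counts))           # (1.5, 0.75)
```

The default call gives 4/3 and 2/3, which the doctest output in the Examples section shortens to (1.33, 0.66).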

Gallery examples

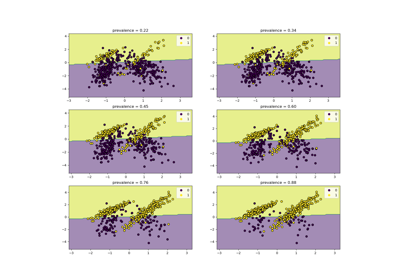

Class Likelihood Ratios to measure classification performance