KFold

- class sklearn.model_selection.KFold(n_splits=5, *, shuffle=False, random_state=None)

K-Fold cross-validator.

Provides train/test indices to split data into train/test sets. Splits the dataset into k consecutive folds (without shuffling by default).

Each fold is then used once as the validation set while the k - 1 remaining folds form the training set.
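The unshuffled splitting scheme described above can be sketched in plain Python. This is a minimal illustration, not scikit-learn's implementation: the helper name `consecutive_folds` is hypothetical, and the sketch assumes no shuffling and the fold-size rule given under Notes.

```python
def consecutive_folds(n_samples, n_splits):
    """Hypothetical helper: yield (train, test) index lists for unshuffled k-fold."""
    if n_splits < 2 or n_splits > n_samples:
        raise ValueError("need 2 <= n_splits <= n_samples")
    # The first n_samples % n_splits folds receive one extra sample.
    base, extra = divmod(n_samples, n_splits)
    start = 0
    for fold in range(n_splits):
        size = base + (1 if fold < extra else 0)
        stop = start + size
        # The current fold is a consecutive block of indices used for testing.
        test = list(range(start, stop))
        # Every index outside the current test fold goes to training.
        train = list(range(0, start)) + list(range(stop, n_samples))
        yield train, test
        start = stop


for train, test in consecutive_folds(5, 2):
    print("train:", train, "test:", test)
```

With 5 samples and 2 folds, the first fold gets the extra sample, so the test folds are `[0, 1, 2]` and `[3, 4]`.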

Read more in the User Guide.

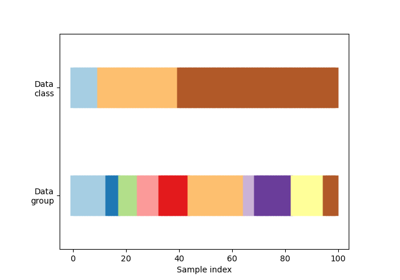

For a visualisation of cross-validation behaviour and a comparison between common scikit-learn split methods, refer to Visualizing cross-validation behavior in scikit-learn.

- Parameters:

- n_splits : int, default=5

Number of folds. Must be at least 2.

Changed in version 0.22: `n_splits` default value changed from 3 to 5.

- shuffle : bool, default=False

Whether to shuffle the data before splitting into batches. Note that the samples within each split will not be shuffled.

- random_state : int, RandomState instance or None, default=None

When `shuffle` is True, `random_state` affects the ordering of the indices, which controls the randomness of each fold. Otherwise, this parameter has no effect. Pass an int for reproducible output across multiple function calls. See Glossary.
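The interaction between `shuffle` and `random_state` can be checked directly. This sketch assumes a recent scikit-learn with NumPy installed; `collect_test_folds` is a hypothetical helper written only for this illustration. It shows that an integer seed reproduces the same shuffled folds, that unshuffled folds are consecutive blocks, and that the indices inside each split are not shuffled (they come back in ascending order).

```python
import numpy as np
from sklearn.model_selection import KFold

X = np.arange(20).reshape(10, 2)  # toy data: 10 samples, 2 features


def collect_test_folds(cv):
    """Hypothetical helper: gather each split's test indices as plain lists."""
    return [test.tolist() for _, test in cv.split(X)]


# The same integer random_state gives identical shuffled folds on every call.
a = collect_test_folds(KFold(n_splits=3, shuffle=True, random_state=0))
b = collect_test_folds(KFold(n_splits=3, shuffle=True, random_state=0))
print(a == b)

# Without shuffling, folds are consecutive blocks of indices (sizes 4, 3, 3).
print(collect_test_folds(KFold(n_splits=3)))

# Even when shuffled, the indices inside each test fold are returned sorted.
print(all(fold == sorted(fold) for fold in a))
```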

See also

StratifiedKFold : Takes class information into account to avoid building folds with imbalanced class distributions (for binary or multiclass classification tasks).

GroupKFold : K-fold iterator variant with non-overlapping groups.

RepeatedKFold : Repeats K-Fold n times.
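The practical difference between `KFold` and two of the classes listed above shows up on label-sorted data. This is a small sketch assuming scikit-learn and NumPy are installed; the toy labels are made up for illustration.

```python
import numpy as np
from sklearn.model_selection import KFold, RepeatedKFold, StratifiedKFold

X = np.zeros((8, 1))                    # features are irrelevant here
y = np.array([0, 0, 0, 0, 1, 1, 1, 1])  # labels sorted by class

# Class labels present in each test fold.
kf_classes = [np.unique(y[test]).tolist()
              for _, test in KFold(n_splits=2).split(X, y)]
skf_classes = [np.unique(y[test]).tolist()
               for _, test in StratifiedKFold(n_splits=2).split(X, y)]
print(kf_classes)   # each unshuffled KFold test fold holds a single class
print(skf_classes)  # each stratified test fold keeps both classes

# RepeatedKFold runs K-Fold n_repeats times: n_splits * n_repeats splits in total.
rkf = RepeatedKFold(n_splits=2, n_repeats=3, random_state=0)
print(rkf.get_n_splits())
```

Because plain `KFold` ignores `y`, sorted labels produce single-class test folds unless `shuffle=True` or a stratified splitter is used.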

Notes

The first `n_samples % n_splits` folds have size `n_samples // n_splits + 1`, other folds have size `n_samples // n_splits`, where `n_samples` is the number of samples.

Randomized CV splitters may return different results for each call of split. You can make the results identical by setting `random_state` to an integer.

Examples

    >>> import numpy as np
    >>> from sklearn.model_selection import KFold
    >>> X = np.array([[1, 2], [3, 4], [1, 2], [3, 4]])
    >>> y = np.array([1, 2, 3, 4])
    >>> kf = KFold(n_splits=2)
    >>> kf.get_n_splits()
    2
    >>> print(kf)
    KFold(n_splits=2, random_state=None, shuffle=False)
    >>> for i, (train_index, test_index) in enumerate(kf.split(X)):
    ...     print(f"Fold {i}:")
    ...     print(f"  Train: index={train_index}")
    ...     print(f"  Test:  index={test_index}")
    Fold 0:
      Train: index=[2 3]
      Test:  index=[0 1]
    Fold 1:
      Train: index=[0 1]
      Test:  index=[2 3]
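The fold-size rule given under Notes can be checked against the splitter itself. A minimal sketch, assuming scikit-learn and NumPy are installed; the sample counts are arbitrary.

```python
import numpy as np
from sklearn.model_selection import KFold

n_samples, n_splits = 10, 3
X = np.zeros((n_samples, 1))

# Test-fold sizes reported by KFold.
observed = [len(test) for _, test in KFold(n_splits=n_splits).split(X)]

# Sizes predicted by the rule: the first n_samples % n_splits folds get
# n_samples // n_splits + 1 samples, the remaining folds get n_samples // n_splits.
q, r = divmod(n_samples, n_splits)
predicted = [q + 1] * r + [q] * (n_splits - r)

print(observed, predicted)  # [4, 3, 3] [4, 3, 3]
```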

- get_metadata_routing()

Get metadata routing of this object.

Please check the User Guide for details on how the routing mechanism works.

- Returns:

- routing : MetadataRequest

A `MetadataRequest` encapsulating routing information.

- get_n_splits(X=None, y=None, groups=None)

Returns the number of splitting iterations as set with the `n_splits` param when instantiating the cross-validator.

- Parameters:

- X : array-like of shape (n_samples, n_features), default=None

Always ignored, exists for API compatibility.

- y : array-like of shape (n_samples,), default=None

Always ignored, exists for API compatibility.

- groups : array-like of shape (n_samples,), default=None

Always ignored, exists for API compatibility.

- Returns:

- n_splits : int

Returns the number of splitting iterations in the cross-validator.
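Since `X`, `y` and `groups` are ignored, `get_n_splits` can be called before any data is available, and its result matches the number of pairs that `split` yields. A short sketch, assuming scikit-learn and NumPy are installed; the array is toy data.

```python
import numpy as np
from sklearn.model_selection import KFold

kf = KFold(n_splits=4)

# No data is needed: the value comes from the n_splits constructor argument.
print(kf.get_n_splits())  # 4

# It agrees with the number of (train, test) pairs produced by split().
X = np.arange(12).reshape(6, 2)
n_pairs = len(list(kf.split(X)))
print(kf.get_n_splits(X) == n_pairs)  # True
```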

- split(X, y=None, groups=None)

Generate indices to split data into training and test sets.

- Parameters:

- X : array-like of shape (n_samples, n_features)

Training data, where `n_samples` is the number of samples and `n_features` is the number of features.

- y : array-like of shape (n_samples,), default=None

The target variable for supervised learning problems.

- groups : array-like of shape (n_samples,), default=None

Always ignored, exists for API compatibility.

- Yields:

- train : ndarray

The training set indices for that split.

- test : ndarray

The testing set indices for that split.
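A typical use of `split` is to slice feature and target arrays inside a loop. This sketch assumes scikit-learn and NumPy; the arrays are toy data, and the inline assertions only illustrate properties of the yielded indices.

```python
import numpy as np
from sklearn.model_selection import KFold

X = np.arange(14).reshape(7, 2)  # 7 samples, 2 features
y = np.arange(7) * 10            # toy targets

kf = KFold(n_splits=3, shuffle=True, random_state=42)
seen = []
for train_index, test_index in kf.split(X, y):
    # The yielded arrays are positional indices, so they slice X and y directly.
    X_train, X_test = X[train_index], X[test_index]
    y_train, y_test = y[train_index], y[test_index]
    # Train and test indices never overlap within a split.
    assert np.intersect1d(train_index, test_index).size == 0
    assert len(X_train) + len(X_test) == len(X)
    seen.extend(test_index.tolist())

# Across all splits, every sample is used for testing exactly once.
print(sorted(seen) == list(range(len(X))))  # True
```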

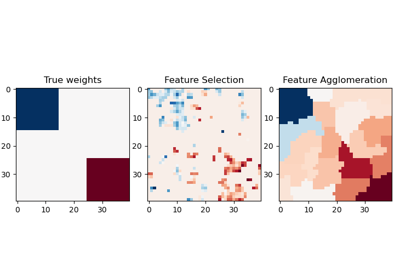

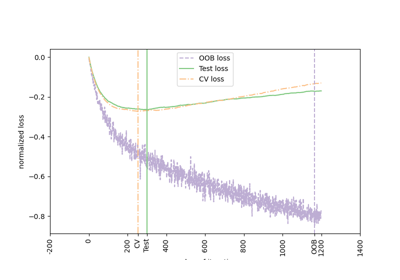

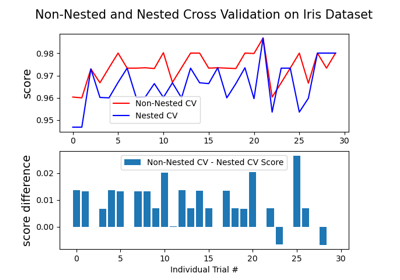

Gallery examples

Comparing Random Forests and Histogram Gradient Boosting models

Visualizing cross-validation behavior in scikit-learn