- scikit-learn Tutorials

- An introduction to machine learning with scikit-learn

- A tutorial on statistical-learning for scientific data processing

- Statistical learning: the setting and the estimator object in scikit-learn

- Supervised learning: predicting an output variable from high-dimensional observations

- Model selection: choosing estimators and their parameters

- Unsupervised learning: seeking representations of the data

- Putting it all together

- Finding help

- Working With Text Data

- Tutorial setup

- Loading the 20 newsgroups dataset

- Extracting features from text files

- Training a classifier

- Building a pipeline

- Evaluation of the performance on the test set

- Parameter tuning using grid search

- Exercise 1: Language identification

- Exercise 2: Sentiment Analysis on movie reviews

- Exercise 3: CLI text classification utility

- Where to from here

- 1. Supervised learning

- 1.1. Generalized Linear Models

- 1.1.1. Ordinary Least Squares

- 1.1.2. Ridge Regression

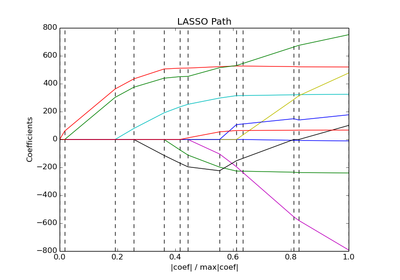

- 1.1.3. Lasso

- 1.1.4. Elastic Net

- 1.1.5. Multi-task Lasso

- 1.1.6. Least Angle Regression

- 1.1.7. LARS Lasso

- 1.1.8. Orthogonal Matching Pursuit (OMP)

- 1.1.9. Bayesian Regression

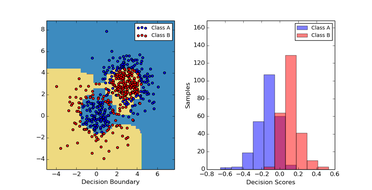

- 1.1.10. Logistic regression

- 1.1.11. Stochastic Gradient Descent - SGD

- 1.1.12. Perceptron

- 1.1.13. Passive Aggressive Algorithms

- 1.1.14. Robustness to outliers: RANSAC

- 1.1.15. Polynomial regression: extending linear models with basis functions

- 1.2. Support Vector Machines

- 1.3. Stochastic Gradient Descent

- 1.4. Nearest Neighbors

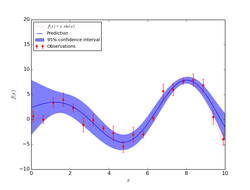

- 1.5. Gaussian Processes

- 1.6. Cross decomposition

- 1.7. Naive Bayes

- 1.8. Decision Trees

- 1.9. Ensemble methods

- 1.9.1. Bagging meta-estimator

- 1.9.2. Forests of randomized trees

- 1.9.3. AdaBoost

- 1.9.4. Gradient Tree Boosting

- 1.10. Multiclass and multilabel algorithms

- 1.11. Feature selection

- 1.12. Semi-Supervised

- 1.13. Linear and quadratic discriminant analysis

- 1.14. Isotonic regression

- 2. Unsupervised learning

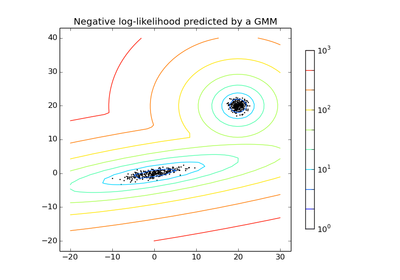

- 2.1. Gaussian mixture models

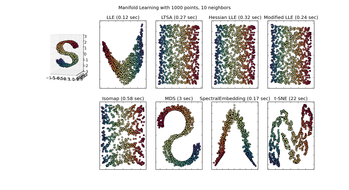

- 2.2. Manifold learning

- 2.2.1. Introduction

- 2.2.2. Isomap

- 2.2.3. Locally Linear Embedding

- 2.2.4. Modified Locally Linear Embedding

- 2.2.5. Hessian Eigenmapping

- 2.2.6. Spectral Embedding

- 2.2.7. Local Tangent Space Alignment

- 2.2.8. Multi-dimensional Scaling (MDS)

- 2.2.9. t-distributed Stochastic Neighbor Embedding (t-SNE)

- 2.2.10. Tips on practical use

- 2.3. Clustering

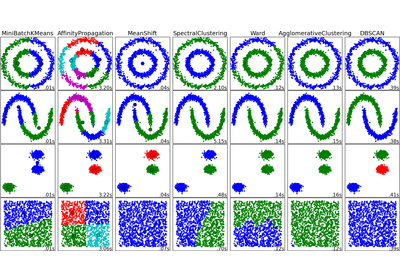

- 2.3.1. Overview of clustering methods

- 2.3.2. K-means

- 2.3.3. Affinity Propagation

- 2.3.4. Mean Shift

- 2.3.5. Spectral clustering

- 2.3.6. Hierarchical clustering

- 2.3.7. DBSCAN

- 2.3.8. Clustering performance evaluation

- 2.4. Biclustering

- 2.5. Decomposing signals in components (matrix factorization problems)

- 2.6. Covariance estimation

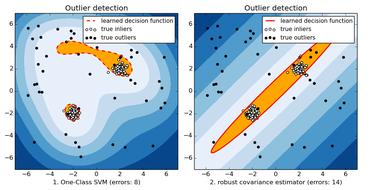

- 2.7. Novelty and Outlier Detection

- 2.8. Density Estimation

- 2.9. Neural network models (unsupervised)

- 3. Model selection and evaluation

- 3.1. Cross-validation: evaluating estimator performance

- 3.2. Grid Search: Searching for estimator parameters

- 3.2.1. Exhaustive Grid Search

- 3.2.2. Randomized Parameter Optimization

- 3.2.3. Alternatives to brute force parameter search

- 3.2.3.1. Model specific cross-validation

- 3.2.3.2. Information Criterion

- 3.2.3.3. Out of Bag Estimates

- 3.2.3.3.1. sklearn.ensemble.RandomForestClassifier

- 3.2.3.3.2. sklearn.ensemble.RandomForestRegressor

- 3.2.3.3.3. sklearn.ensemble.ExtraTreesClassifier

- 3.2.3.3.4. sklearn.ensemble.ExtraTreesRegressor

- 3.2.3.3.5. sklearn.ensemble.GradientBoostingClassifier

- 3.2.3.3.6. sklearn.ensemble.GradientBoostingRegressor

- 3.3. Pipeline: chaining estimators

- 3.4. FeatureUnion: Combining feature extractors

- 3.5. Model evaluation: quantifying the quality of predictions

- 3.5.1. The scoring parameter: defining model evaluation rules

- 3.5.2. Function for prediction-error metrics

- 3.5.2.1. Classification metrics

- 3.5.2.1.1. Accuracy score

- 3.5.2.1.2. Confusion matrix

- 3.5.2.1.3. Classification report

- 3.5.2.1.4. Hamming loss

- 3.5.2.1.5. Jaccard similarity coefficient score

- 3.5.2.1.6. Precision, recall and F-measures

- 3.5.2.1.7. Hinge loss

- 3.5.2.1.8. Log loss

- 3.5.2.1.9. Matthews correlation coefficient

- 3.5.2.1.10. Receiver operating characteristic (ROC)

- 3.5.2.1.11. Zero one loss

- 3.5.2.2. Regression metrics

- 3.5.3. Clustering metrics

- 3.5.4. Biclustering metrics

- 3.5.5. Dummy estimators

- 3.6. Model persistence

- 3.7. Validation curves: plotting scores to evaluate models

- 4. Dataset transformations

- 4.1. Feature extraction

- 4.1.1. Loading features from dicts

- 4.1.2. Feature hashing

- 4.1.3. Text feature extraction

- 4.1.3.1. The Bag of Words representation

- 4.1.3.2. Sparsity

- 4.1.3.3. Common Vectorizer usage

- 4.1.3.4. Tf–idf term weighting

- 4.1.3.5. Decoding text files

- 4.1.3.6. Applications and examples

- 4.1.3.7. Limitations of the Bag of Words representation

- 4.1.3.8. Vectorizing a large text corpus with the hashing trick

- 4.1.3.9. Performing out-of-core scaling with HashingVectorizer

- 4.1.3.10. Customizing the vectorizer classes

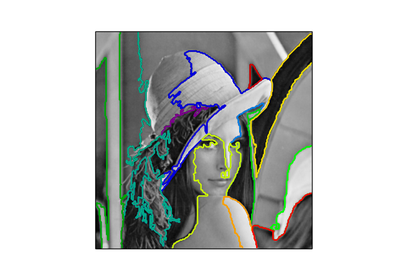

- 4.1.4. Image feature extraction

- 4.2. Preprocessing data

- 4.3. Kernel Approximation

- 4.4. Random Projection

- 4.5. Pairwise metrics, Affinities and Kernels

- 5. Dataset loading utilities

- 5.1. General dataset API

- 5.2. Toy datasets

- 5.3. Sample images

- 5.4. Sample generators

- 5.5. Datasets in svmlight / libsvm format

- 5.6. The Olivetti faces dataset

- 5.7. The 20 newsgroups text dataset

- 5.8. Downloading datasets from the mldata.org repository

- 5.9. The Labeled Faces in the Wild face recognition dataset

- 5.10. Forest covertypes

- 6. Strategies to scale computationally: bigger data

- 7. Computational Performance

- Examples

- General examples

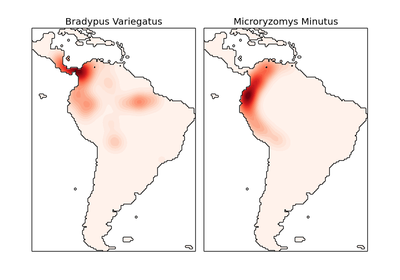

- Examples based on real world datasets

- Biclustering

- Clustering

- Covariance estimation

- Cross decomposition

- Dataset examples

- Decomposition

- Ensemble methods

- Tutorial exercises

- Gaussian Process for Machine Learning

- Generalized Linear Models

- Manifold learning

- Gaussian Mixture Models

- Nearest Neighbors

- Semi Supervised Classification

- Support Vector Machines

- Decision Trees

- Frequently Asked Questions

- What is the project name (a lot of people get it wrong)?

- How do you pronounce the project name?

- Why scikit?

- How can I contribute to scikit-learn?

- Can I add this new algorithm that I (or someone else) just published?

- Can I add this classical algorithm from the 80s?

- Why did you remove HMMs from scikit-learn?

- Will you add graphical models or sequence prediction to scikit-learn?

- Will you add GPU support?

- Do you support PyPy?

- Support

- 0.15.2

- 0.15.1

- 0.15

- 0.14

- 0.13.1

- 0.13

- 0.12.1

- 0.12

- 0.11

- 0.10

- 0.9

- 0.8

- 0.7

- 0.6

- 0.5

- 0.4

- Earlier versions

- External Resources, Videos and Talks

- About us

- Documentation of scikit-learn 0.15

- Reference

- sklearn.base: Base classes and utility functions

- sklearn.cluster: Clustering

- Classes

- Functions

- sklearn.cluster.bicluster: Biclustering

- sklearn.covariance: Covariance Estimators

- sklearn.covariance.EmpiricalCovariance

- sklearn.covariance.EllipticEnvelope

- sklearn.covariance.GraphLasso

- sklearn.covariance.GraphLassoCV

- sklearn.covariance.LedoitWolf

- sklearn.covariance.MinCovDet

- sklearn.covariance.OAS

- sklearn.covariance.ShrunkCovariance

- sklearn.covariance.empirical_covariance

- sklearn.covariance.ledoit_wolf

- sklearn.covariance.shrunk_covariance

- sklearn.covariance.oas

- sklearn.covariance.graph_lasso

- sklearn.cross_validation: Cross Validation

- sklearn.cross_validation.KFold

- sklearn.cross_validation.LeaveOneLabelOut

- sklearn.cross_validation.LeaveOneOut

- sklearn.cross_validation.LeavePLabelOut

- sklearn.cross_validation.LeavePOut

- sklearn.cross_validation.StratifiedKFold

- sklearn.cross_validation.ShuffleSplit

- sklearn.cross_validation.StratifiedShuffleSplit

- sklearn.cross_validation.train_test_split

- sklearn.cross_validation.cross_val_score

- sklearn.cross_validation.permutation_test_score

- sklearn.cross_validation.check_cv

- sklearn.datasets: Datasets

- Loaders

- sklearn.datasets.fetch_20newsgroups

- sklearn.datasets.fetch_20newsgroups_vectorized

- sklearn.datasets.load_boston

- sklearn.datasets.load_diabetes

- sklearn.datasets.load_digits

- sklearn.datasets.load_files

- sklearn.datasets.load_iris

- sklearn.datasets.load_lfw_pairs

- sklearn.datasets.fetch_lfw_pairs

- sklearn.datasets.load_lfw_people

- sklearn.datasets.fetch_lfw_people

- sklearn.datasets.load_linnerud

- sklearn.datasets.fetch_mldata

- sklearn.datasets.fetch_olivetti_faces

- sklearn.datasets.fetch_california_housing

- sklearn.datasets.fetch_covtype

- sklearn.datasets.load_mlcomp

- sklearn.datasets.load_sample_image

- sklearn.datasets.load_sample_images

- sklearn.datasets.load_svmlight_file

- sklearn.datasets.dump_svmlight_file

- Samples generator

- sklearn.datasets.make_blobs

- sklearn.datasets.make_classification

- sklearn.datasets.make_circles

- sklearn.datasets.make_friedman1

- sklearn.datasets.make_friedman2

- sklearn.datasets.make_friedman3

- sklearn.datasets.make_gaussian_quantiles

- sklearn.datasets.make_hastie_10_2

- sklearn.datasets.make_low_rank_matrix

- sklearn.datasets.make_moons

- sklearn.datasets.make_multilabel_classification

- sklearn.datasets.make_regression

- sklearn.datasets.make_s_curve

- sklearn.datasets.make_sparse_coded_signal

- sklearn.datasets.make_sparse_spd_matrix

- sklearn.datasets.make_sparse_uncorrelated

- sklearn.datasets.make_spd_matrix

- sklearn.datasets.make_swiss_roll

- sklearn.datasets.make_biclusters

- sklearn.datasets.make_checkerboard

- sklearn.decomposition: Matrix Decomposition

- sklearn.decomposition.PCA

- sklearn.decomposition.ProjectedGradientNMF

- sklearn.decomposition.RandomizedPCA

- sklearn.decomposition.KernelPCA

- sklearn.decomposition.FactorAnalysis

- sklearn.decomposition.FastICA

- sklearn.decomposition.TruncatedSVD

- sklearn.decomposition.NMF

- sklearn.decomposition.SparsePCA

- sklearn.decomposition.MiniBatchSparsePCA

- sklearn.decomposition.SparseCoder

- sklearn.decomposition.DictionaryLearning

- sklearn.decomposition.MiniBatchDictionaryLearning

- sklearn.decomposition.fastica

- sklearn.decomposition.dict_learning

- sklearn.decomposition.dict_learning_online

- sklearn.decomposition.sparse_encode

- sklearn.dummy: Dummy estimators

- sklearn.ensemble: Ensemble Methods

- sklearn.ensemble.AdaBoostClassifier

- sklearn.ensemble.AdaBoostRegressor

- sklearn.ensemble.BaggingClassifier

- sklearn.ensemble.BaggingRegressor

- sklearn.ensemble.ExtraTreesClassifier

- sklearn.ensemble.ExtraTreesRegressor

- sklearn.ensemble.GradientBoostingClassifier

- sklearn.ensemble.GradientBoostingRegressor

- sklearn.ensemble.RandomForestClassifier

- sklearn.ensemble.RandomTreesEmbedding

- sklearn.ensemble.RandomForestRegressor

- partial dependence

- sklearn.feature_extraction: Feature Extraction

- sklearn.feature_extraction.DictVectorizer

- sklearn.feature_extraction.FeatureHasher

- From images

- From text

- sklearn.feature_selection: Feature Selection

- sklearn.feature_selection.GenericUnivariateSelect

- sklearn.feature_selection.SelectPercentile

- sklearn.feature_selection.SelectKBest

- sklearn.feature_selection.SelectFpr

- sklearn.feature_selection.SelectFdr

- sklearn.feature_selection.SelectFwe

- sklearn.feature_selection.RFE

- sklearn.feature_selection.RFECV

- sklearn.feature_selection.VarianceThreshold

- sklearn.feature_selection.chi2

- sklearn.feature_selection.f_classif

- sklearn.feature_selection.f_regression

- sklearn.gaussian_process: Gaussian Processes

- sklearn.gaussian_process.GaussianProcess

- sklearn.gaussian_process.correlation_models.absolute_exponential

- sklearn.gaussian_process.correlation_models.squared_exponential

- sklearn.gaussian_process.correlation_models.generalized_exponential

- sklearn.gaussian_process.correlation_models.pure_nugget

- sklearn.gaussian_process.correlation_models.cubic

- sklearn.gaussian_process.correlation_models.linear

- sklearn.gaussian_process.regression_models.constant

- sklearn.gaussian_process.regression_models.linear

- sklearn.gaussian_process.regression_models.quadratic

- sklearn.grid_search: Grid Search

- sklearn.isotonic: Isotonic regression

- sklearn.kernel_approximation: Kernel Approximation

- sklearn.lda: Linear Discriminant Analysis

- sklearn.learning_curve: Learning curve evaluation

- sklearn.linear_model: Generalized Linear Models

- sklearn.linear_model.ARDRegression

- sklearn.linear_model.BayesianRidge

- sklearn.linear_model.ElasticNet

- sklearn.linear_model.ElasticNetCV

- sklearn.linear_model.Lars

- sklearn.linear_model.LarsCV

- sklearn.linear_model.Lasso

- sklearn.linear_model.LassoCV

- sklearn.linear_model.LassoLars

- sklearn.linear_model.LassoLarsCV

- sklearn.linear_model.LassoLarsIC

- sklearn.linear_model.LinearRegression

- sklearn.linear_model.LogisticRegression

- sklearn.linear_model.MultiTaskLasso

- sklearn.linear_model.MultiTaskElasticNet

- sklearn.linear_model.MultiTaskLassoCV

- sklearn.linear_model.MultiTaskElasticNetCV

- sklearn.linear_model.OrthogonalMatchingPursuit

- sklearn.linear_model.OrthogonalMatchingPursuitCV

- sklearn.linear_model.PassiveAggressiveClassifier

- sklearn.linear_model.PassiveAggressiveRegressor

- sklearn.linear_model.Perceptron

- sklearn.linear_model.RandomizedLasso

- sklearn.linear_model.RandomizedLogisticRegression

- sklearn.linear_model.RANSACRegressor

- sklearn.linear_model.Ridge

- sklearn.linear_model.RidgeClassifier

- sklearn.linear_model.RidgeClassifierCV

- sklearn.linear_model.RidgeCV

- sklearn.linear_model.SGDClassifier

- sklearn.linear_model.SGDRegressor

- sklearn.linear_model.lars_path

- sklearn.linear_model.lasso_path

- sklearn.linear_model.lasso_stability_path

- sklearn.linear_model.orthogonal_mp

- sklearn.linear_model.orthogonal_mp_gram

- sklearn.manifold: Manifold Learning

- sklearn.metrics: Metrics

- Model Selection Interface

- Classification metrics

- sklearn.metrics.accuracy_score

- sklearn.metrics.auc

- sklearn.metrics.average_precision_score

- sklearn.metrics.classification_report

- sklearn.metrics.confusion_matrix

- sklearn.metrics.f1_score

- sklearn.metrics.fbeta_score

- sklearn.metrics.hamming_loss

- sklearn.metrics.hinge_loss

- sklearn.metrics.jaccard_similarity_score

- sklearn.metrics.log_loss

- sklearn.metrics.matthews_corrcoef

- sklearn.metrics.precision_recall_curve

- sklearn.metrics.precision_recall_fscore_support

- sklearn.metrics.precision_score

- sklearn.metrics.recall_score

- sklearn.metrics.roc_auc_score

- sklearn.metrics.roc_curve

- sklearn.metrics.zero_one_loss

- Regression metrics

- Clustering metrics

- sklearn.metrics.adjusted_mutual_info_score

- sklearn.metrics.adjusted_rand_score

- sklearn.metrics.completeness_score

- sklearn.metrics.homogeneity_completeness_v_measure

- sklearn.metrics.homogeneity_score

- sklearn.metrics.mutual_info_score

- sklearn.metrics.normalized_mutual_info_score

- sklearn.metrics.silhouette_score

- sklearn.metrics.silhouette_samples

- sklearn.metrics.v_measure_score

- Biclustering metrics

- Pairwise metrics

- sklearn.metrics.pairwise.additive_chi2_kernel

- sklearn.metrics.pairwise.chi2_kernel

- sklearn.metrics.pairwise.distance_metrics

- sklearn.metrics.pairwise.euclidean_distances

- sklearn.metrics.pairwise.kernel_metrics

- sklearn.metrics.pairwise.linear_kernel

- sklearn.metrics.pairwise.manhattan_distances

- sklearn.metrics.pairwise.pairwise_distances

- sklearn.metrics.pairwise.pairwise_kernels

- sklearn.metrics.pairwise.polynomial_kernel

- sklearn.metrics.pairwise.rbf_kernel

- sklearn.metrics.pairwise_distances

- sklearn.metrics.pairwise_distances_argmin

- sklearn.metrics.pairwise_distances_argmin_min

- sklearn.mixture: Gaussian Mixture Models

- sklearn.multiclass: Multiclass and multilabel classification

- Multiclass and multilabel classification strategies

- sklearn.multiclass.OneVsRestClassifier

- sklearn.multiclass.OneVsOneClassifier

- sklearn.multiclass.OutputCodeClassifier

- sklearn.multiclass.fit_ovr

- sklearn.multiclass.predict_ovr

- sklearn.multiclass.fit_ovo

- sklearn.multiclass.predict_ovo

- sklearn.multiclass.fit_ecoc

- sklearn.multiclass.predict_ecoc

- sklearn.naive_bayes: Naive Bayes

- sklearn.neighbors: Nearest Neighbors

- sklearn.neighbors.NearestNeighbors

- sklearn.neighbors.KNeighborsClassifier

- sklearn.neighbors.RadiusNeighborsClassifier

- sklearn.neighbors.KNeighborsRegressor

- sklearn.neighbors.RadiusNeighborsRegressor

- sklearn.neighbors.NearestCentroid

- sklearn.neighbors.BallTree

- sklearn.neighbors.KDTree

- sklearn.neighbors.DistanceMetric

- sklearn.neighbors.KernelDensity

- sklearn.neighbors.kneighbors_graph

- sklearn.neighbors.radius_neighbors_graph

- sklearn.neural_network: Neural network models

- sklearn.cross_decomposition: Cross decomposition

- sklearn.pipeline: Pipeline

- sklearn.preprocessing: Preprocessing and Normalization

- sklearn.preprocessing.Binarizer

- sklearn.preprocessing.Imputer

- sklearn.preprocessing.KernelCenterer

- sklearn.preprocessing.LabelBinarizer

- sklearn.preprocessing.LabelEncoder

- sklearn.preprocessing.MultiLabelBinarizer

- sklearn.preprocessing.MinMaxScaler

- sklearn.preprocessing.Normalizer

- sklearn.preprocessing.OneHotEncoder

- sklearn.preprocessing.StandardScaler

- sklearn.preprocessing.PolynomialFeatures

- sklearn.preprocessing.add_dummy_feature

- sklearn.preprocessing.binarize

- sklearn.preprocessing.label_binarize

- sklearn.preprocessing.normalize

- sklearn.preprocessing.scale

- sklearn.qda: Quadratic Discriminant Analysis

- sklearn.random_projection: Random projection

- sklearn.semi_supervised: Semi-Supervised Learning

- sklearn.svm: Support Vector Machines

- sklearn.tree: Decision Trees

- sklearn.utils: Utilities

- Who is using scikit-learn?

- Contributing

- Developers’ Tips for Debugging

- Maintainer / core-developer information

- How to optimize for speed

- Utilities for Developers

- Installing scikit-learn

- An introduction to machine learning with scikit-learn

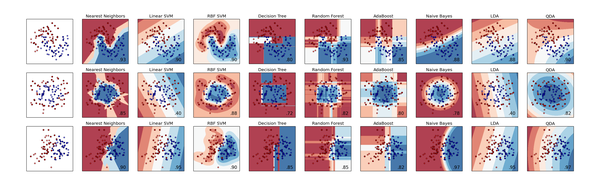

- Choosing the right estimator

Identifying which category a new observation belongs to.

Applications: Spam detection, Image recognition. Algorithms: SVM, nearest neighbors, random forest, ...
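As a rough illustration of classification, the sketch below fits a nearest-neighbors classifier on a tiny made-up dataset and predicts the category of two new observations. The data, the choice of `n_neighbors=3`, and the variable names are assumptions for illustration only, not taken from the scikit-learn documentation.

```python
from sklearn.neighbors import KNeighborsClassifier

# Toy data (invented for this sketch): two well-separated groups in 2-D.
X_train = [[0.0, 0.0], [0.2, 0.1], [0.1, 0.3],
           [3.0, 3.0], [3.2, 2.9], [2.9, 3.1]]
y_train = [0, 0, 0, 1, 1, 1]

# Each prediction is a majority vote among the 3 closest training points.
clf = KNeighborsClassifier(n_neighbors=3)
clf.fit(X_train, y_train)

# Predict the category of two new observations, one near each group.
predictions = clf.predict([[0.1, 0.1], [3.1, 3.0]])
print(predictions)
```

Other classifiers such as SVMs or random forests should be usable the same way, since they share the `fit` / `predict` interface.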

Predicting a continuous value for a new example.

Applications: Drug response, Stock prices. Algorithms: SVR, ridge regression, Lasso, ...
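A minimal regression sketch, assuming a made-up one-feature dataset that follows `y = 2x + 1` and an arbitrary regularization strength `alpha=0.1`; it fits a ridge regression and predicts a continuous value for an unseen input.

```python
from sklearn.linear_model import Ridge

# Toy data (invented for this sketch): y = 2 * x + 1, no noise.
X = [[0.0], [1.0], [2.0], [3.0]]
y = [1.0, 3.0, 5.0, 7.0]

# alpha controls the L2 penalty; 0.1 is an arbitrary illustrative value.
reg = Ridge(alpha=0.1)
reg.fit(X, y)

# Predict a continuous value for a new example.
pred = reg.predict([[4.0]])
print(reg.coef_, reg.intercept_, pred)
```

Because of the penalty, the fitted slope is shrunk slightly below 2, so the prediction for `x = 4` lands a little under 9.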

Automatic grouping of similar objects into sets.

Applications: Customer segmentation, Grouping experiment outcomes. Algorithms: k-Means, spectral clustering, mean-shift, ...
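The following sketch shows clustering with k-means on a small invented dataset. The points, the number of clusters, and the `random_state` value are assumptions chosen so the example is easy to follow; no labels are given to the algorithm.

```python
from sklearn.cluster import KMeans

# Toy data (invented for this sketch): two visually obvious groups.
X = [[0.0, 0.0], [0.2, 0.1], [0.1, 0.3],
     [3.0, 3.0], [3.2, 2.9], [2.9, 3.1]]

# Ask for two clusters; n_init restarts guard against a poor initialization.
km = KMeans(n_clusters=2, n_init=10, random_state=0)
labels = km.fit_predict(X)

# Cluster ids are arbitrary (0 or 1); only the grouping is meaningful.
print(labels)
```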

Reducing the number of random variables to consider.

Applications: Visualization, Increased efficiency. Algorithms:
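One way to picture dimensionality reduction is with `sklearn.decomposition.PCA`, which appears in the reference listing of this documentation. The choice of PCA here, the invented 3-feature dataset, and `n_components=1` are assumptions made for this sketch.

```python
from sklearn.decomposition import PCA

# Toy data (invented for this sketch): 3 features, but the points lie
# almost on a single line, so one component captures most of the variance.
X = [[1.0, 2.0, 0.0],
     [2.0, 4.1, 0.1],
     [3.0, 6.0, -0.1],
     [4.0, 7.9, 0.0],
     [5.0, 10.1, 0.1]]

# Project the 3-D observations onto their first principal component.
pca = PCA(n_components=1)
X_reduced = pca.fit_transform(X)

print(X_reduced.shape)
print(pca.explained_variance_ratio_)
```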

Comparing, validating and choosing parameters and models.

Goal: Improved accuracy via parameter tuning. Modules:

Feature extraction and normalization.

Application: Transforming input data such as text for use with machine learning algorithms. Modules: preprocessing, feature extraction.
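A short sketch of both ideas, assuming three made-up sentences and a made-up numeric column: `CountVectorizer` turns text into word-count features, and `StandardScaler` normalizes a numeric feature to zero mean and unit variance.

```python
from sklearn.feature_extraction.text import CountVectorizer
from sklearn.preprocessing import StandardScaler

# Feature extraction: turn raw text into a document-term count matrix.
docs = ["the cat sat", "the dog sat", "the cat ran"]  # invented examples
vectorizer = CountVectorizer()
X_text = vectorizer.fit_transform(docs)  # sparse matrix, one row per document

# vocabulary_ maps each word to its column index (alphabetical order).
print(sorted(vectorizer.vocabulary_))
print(X_text.toarray())

# Normalization: rescale a numeric feature to zero mean and unit variance.
X_num = [[1.0], [2.0], [3.0]]  # invented values
X_scaled = StandardScaler().fit_transform(X_num)
print(X_scaled)
```

The resulting matrices can then be passed to an estimator's `fit` method like any other numeric input.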

News

- On-going development: What's new (changelog)

- August 2014. scikit-learn 0.15.1 is available for download (Changelog).

- July 2014. scikit-learn 0.15.0 is available for download (Changelog).

- July 14-20th, 2014: international sprint. During this week-long sprint, we gathered 18 of the core contributors in Paris. We want to thank our sponsors: Paris-Saclay Center for Data Science & Digicosme and our hosts La Paillasse, Criteo, Inria, and tinyclues.

- August 2013. scikit-learn 0.14 is available for download (Changelog).

Community

- About us: see authors

- Questions? See Stack Overflow, tag scikit-learn

- Mailing list: scikit-learn-general@lists.sourceforge.net

- IRC: #scikit-learn @ freenode