LogisticRegressionCV

- class sklearn.linear_model.LogisticRegressionCV(*, Cs=10, l1_ratios='warn', fit_intercept=True, cv=None, dual=False, penalty='deprecated', scoring=None, solver='lbfgs', tol=0.0001, max_iter=100, class_weight=None, n_jobs=None, verbose=0, refit=True, intercept_scaling=1.0, random_state=None, use_legacy_attributes='warn')

Logistic Regression CV (aka logit, MaxEnt) classifier.

See glossary entry for cross-validation estimator.

This class implements regularized logistic regression with implicit cross validation for the penalty parameters C and l1_ratio, see LogisticRegression, using a set of available solvers.

The solvers ‘lbfgs’, ‘newton-cg’, ‘newton-cholesky’ and ‘sag’ support only L2 regularization with primal formulation. The ‘liblinear’ solver supports both L1 and L2 regularization (but not both, i.e. elastic-net), with a dual formulation only for the L2 penalty. The Elastic-Net (combination of L1 and L2) regularization is only supported by the ‘saga’ solver.

For the grid of Cs values and l1_ratios values, the best hyperparameter is selected by the cross-validator StratifiedKFold, but it can be changed using the cv parameter. All solvers except ‘liblinear’ can warm-start the coefficients (see Glossary).

Read more in the User Guide.

- Parameters:

- Cs : int or list of floats, default=10

Each of the values in Cs describes the inverse of regularization strength. If Cs is an int, then a grid of Cs values is chosen on a logarithmic scale between 1e-4 and 1e4. Like in support vector machines, smaller values specify stronger regularization.
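As a rough illustration of the integer case, the grid described above can be reproduced with NumPy. This is a sketch that assumes the points are evenly spaced in log space over the stated bounds, as np.logspace produces; the names n_cs and grid are illustrative only.

```python
import numpy as np

# Assumption: an integer Cs expands to that many points, evenly spaced
# on a log scale between 1e-4 and 1e4 (the bounds stated above).
n_cs = 10
grid = np.logspace(-4, 4, n_cs)

# Smaller C means stronger regularization, so grid[0] is the most
# heavily regularized candidate and grid[-1] the least.
print(grid)
```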

- l1_ratios : array-like of shape (n_l1_ratios), default=None

Floats between 0 and 1 passed as Elastic-Net mixing parameter (scaling between L1 and L2 penalties). For l1_ratio = 0 the penalty is an L2 penalty. For l1_ratio = 1 it is an L1 penalty. For 0 < l1_ratio < 1, the penalty is a combination of L1 and L2. All the values of the given array-like are tested by cross-validation and the one giving the best prediction score is used.

Warning

Certain values of l1_ratios, i.e. some penalties, may not work with some solvers. See the parameter solver below, to know the compatibility between the penalty and solver.

Deprecated since version 1.8: l1_ratios=None is deprecated in 1.8 and will raise an error in version 1.10. Default value will change from None to (0.0,) in version 1.10.

- fit_intercept : bool, default=True

Specifies if a constant (a.k.a. bias or intercept) should be added to the decision function.

- cv : int or cross-validation generator, default=None

The default cross-validation generator used is Stratified K-Folds. If an integer is provided, it specifies the number of folds, n_folds, used. See the sklearn.model_selection module for the list of possible cross-validation objects.

Changed in version 0.22: cv default value if None changed from 3-fold to 5-fold.

- dual : bool, default=False

Dual (constrained) or primal (regularized, see also this equation) formulation. Dual formulation is only implemented for l2 penalty with liblinear solver. Prefer dual=False when n_samples > n_features.

- penalty : {‘l1’, ‘l2’, ‘elasticnet’}, default=’l2’

Specify the norm of the penalty:

'l2': add an L2 penalty term (used by default);

'l1': add an L1 penalty term;

'elasticnet': both L1 and L2 penalty terms are added.

Warning

Some penalties may not work with some solvers. See the parameter solver below, to know the compatibility between the penalty and solver.

Deprecated since version 1.8: penalty was deprecated in version 1.8 and will be removed in 1.10. Use l1_ratio instead. l1_ratio=0 for penalty='l2', l1_ratio=1 for penalty='l1' and l1_ratio set to any float between 0 and 1 for penalty='elasticnet'.

- scoring : str or callable, default=None

The scoring method to use for cross-validation. Options:

str: see String name scorers for options.

callable: a scorer callable object (e.g., function) with signature scorer(estimator, X, y). See Callable scorers for details.

None: accuracy is used.
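The callable option can be sketched as follows. The function name balanced_accuracy_scorer and the synthetic dataset are assumptions made for illustration; only the scorer(estimator, X, y) signature comes from the description above. Depending on the installed version, leaving l1_ratios and use_legacy_attributes at their defaults may emit a FutureWarning.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegressionCV
from sklearn.metrics import balanced_accuracy_score


def balanced_accuracy_scorer(estimator, X, y):
    """Hypothetical scorer following the scorer(estimator, X, y) signature."""
    return balanced_accuracy_score(y, estimator.predict(X))


X, y = make_classification(n_samples=200, n_features=8, random_state=0)

# Each candidate C is scored on the held-out folds with the callable above.
clf = LogisticRegressionCV(
    Cs=5, cv=3, scoring=balanced_accuracy_scorer, max_iter=1000
).fit(X, y)
print(clf.Cs_)
```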

- solver : {‘lbfgs’, ‘liblinear’, ‘newton-cg’, ‘newton-cholesky’, ‘sag’, ‘saga’}, default=’lbfgs’

Algorithm to use in the optimization problem. Default is ‘lbfgs’. To choose a solver, you might want to consider the following aspects:

‘lbfgs’ is a good default solver because it works reasonably well for a wide class of problems.

For multiclass problems (n_classes >= 3), all solvers except ‘liblinear’ minimize the full multinomial loss, ‘liblinear’ will raise an error.

‘newton-cholesky’ is a good choice for n_samples >> n_features * n_classes, especially with one-hot encoded categorical features with rare categories. Be aware that the memory usage of this solver has a quadratic dependency on n_features * n_classes because it explicitly computes the full Hessian matrix.

For small datasets, ‘liblinear’ is a good choice, whereas ‘sag’ and ‘saga’ are faster for large ones;

‘liblinear’ might be slower in LogisticRegressionCV because it does not handle warm-starting.

‘liblinear’ can only handle binary classification by default. To apply a one-versus-rest scheme for the multiclass setting one can wrap it with the OneVsRestClassifier.

Warning

The choice of the algorithm depends on the penalty (l1_ratio=0 for L2-penalty, l1_ratio=1 for L1-penalty and 0 < l1_ratio < 1 for Elastic-Net) chosen and on (multinomial) multiclass support:

| solver | l1_ratio | multinomial multiclass |
|---|---|---|
| ‘lbfgs’ | l1_ratio=0 | yes |
| ‘liblinear’ | l1_ratio=1 or l1_ratio=0 | no |
| ‘newton-cg’ | l1_ratio=0 | yes |
| ‘newton-cholesky’ | l1_ratio=0 | yes |
| ‘sag’ | l1_ratio=0 | yes |
| ‘saga’ | 0<=l1_ratio<=1 | yes |

Note

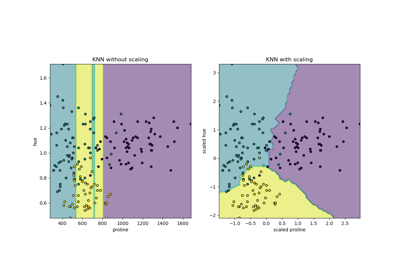

‘sag’ and ‘saga’ fast convergence is only guaranteed on features with approximately the same scale. You can preprocess the data with a scaler from sklearn.preprocessing.

Added in version 0.17: Stochastic Average Gradient (SAG) descent solver. Multinomial support in version 0.18.

Added in version 0.19: SAGA solver.

Added in version 1.2: newton-cholesky solver. Multinomial support in version 1.6.
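The scaling advice for ‘sag’ and ‘saga’ can be followed by putting a scaler in front of the estimator. The sketch below assumes a synthetic dataset with one deliberately inflated feature and uses StandardScaler as one possible scaler from sklearn.preprocessing; the parameter values are illustrative, not recommendations. Depending on the installed version, leaving l1_ratios and use_legacy_attributes at their defaults may emit a FutureWarning.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegressionCV
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = make_classification(n_samples=300, n_features=10, random_state=0)
# Exaggerate the scale of one feature to mimic unscaled input.
X[:, 0] *= 1000.0

# Standardizing first puts all features on a comparable scale, which is
# the condition under which 'saga' is expected to converge quickly.
model = make_pipeline(
    StandardScaler(),
    LogisticRegressionCV(Cs=5, cv=3, solver="saga", max_iter=2000, random_state=0),
)
model.fit(X, y)
print(model.score(X, y))
```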

- tol : float, default=1e-4

Tolerance for stopping criteria.

- max_iter : int, default=100

Maximum number of iterations of the optimization algorithm.

- class_weight : dict or ‘balanced’, default=None

Weights associated with classes in the form {class_label: weight}. If not given, all classes are supposed to have weight one.

The “balanced” mode uses the values of y to automatically adjust weights inversely proportional to class frequencies in the input data as n_samples / (n_classes * np.bincount(y)).

Note that these weights will be multiplied with sample_weight (passed through the fit method) if sample_weight is specified.

Added in version 0.17: class_weight == ‘balanced’
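The “balanced” formula can be checked by hand on a small label vector. This sketch uses only NumPy and a made-up y with integer labels starting at 0, which is what np.bincount expects.

```python
import numpy as np

# Toy labels: class 0 appears three times, class 1 once.
y = np.array([0, 0, 0, 1])

n_samples = y.shape[0]
n_classes = np.unique(y).shape[0]

# The "balanced" heuristic quoted above: rarer classes get larger weights.
weights = n_samples / (n_classes * np.bincount(y))
print(weights)  # class 0 -> 4 / (2 * 3), class 1 -> 4 / (2 * 1)
```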

- n_jobs : int, default=None

Number of CPU cores used during the cross-validation loop. None means 1 unless in a joblib.parallel_backend context. -1 means using all processors. See Glossary for more details.

- verbose : int, default=0

For the ‘liblinear’, ‘sag’ and ‘lbfgs’ solvers set verbose to any positive number for verbosity.

- refit : bool, default=True

If set to True, the scores are averaged across all folds, the coefs and the C that correspond to the best score are taken, and a final refit is done using these parameters. Otherwise the coefs, intercepts and C that correspond to the best scores across folds are averaged.

- intercept_scaling : float, default=1

Useful only when the solver liblinear is used and self.fit_intercept is set to True. In this case, x becomes [x, self.intercept_scaling], i.e. a “synthetic” feature with constant value equal to intercept_scaling is appended to the instance vector. The intercept becomes intercept_scaling * synthetic_feature_weight.

Note

The synthetic feature weight is subject to L1 or L2 regularization as all other features. To lessen the effect of regularization on synthetic feature weight (and therefore on the intercept) intercept_scaling has to be increased.

- random_state : int, RandomState instance, default=None

Used when solver=‘sag’, ‘saga’ or ‘liblinear’ to shuffle the data. Note that this only applies to the solver and not the cross-validation generator. See Glossary for details.

- use_legacy_attributes : bool, default=True

If True, use legacy values for attributes:

C_ is an ndarray of shape (n_classes,) with the same value repeated

l1_ratio_ is an ndarray of shape (n_classes,) with the same value repeated

coefs_paths_ is a dict with class labels as keys and ndarrays as values

scores_ is a dict with class labels as keys and ndarrays as values

n_iter_ is an ndarray of shape (1, n_folds, n_cs) or similar

If False, use new values for attributes:

C_ is a float

l1_ratio_ is a float

coefs_paths_ is an ndarray of shape (n_folds, n_l1_ratios, n_cs, n_classes, n_features). For binary problems (n_classes=2), the 2nd last dimension is 1.

scores_ is an ndarray of shape (n_folds, n_l1_ratios, n_cs)

n_iter_ is an ndarray of shape (n_folds, n_l1_ratios, n_cs)

Changed in version 1.10: The default will change from True to False in version 1.10.

Deprecated since version 1.10: use_legacy_attributes will be deprecated in version 1.10 and be removed in 1.12.

- Attributes:

- classes_ : ndarray of shape (n_classes,)

A list of class labels known to the classifier.

- coef_ : ndarray of shape (1, n_features) or (n_classes, n_features)

Coefficient of the features in the decision function.

coef_ is of shape (1, n_features) when the given problem is binary.

- intercept_ : ndarray of shape (1,) or (n_classes,)

Intercept (a.k.a. bias) added to the decision function.

If fit_intercept is set to False, the intercept is set to zero. intercept_ is of shape (1,) when the problem is binary.

- Cs_ : ndarray of shape (n_cs)

Array of C i.e. inverse of regularization parameter values used for cross-validation.

- l1_ratios_ : ndarray of shape (n_l1_ratios)

Array of l1_ratios used for cross-validation. If l1_ratios=None is used (i.e. penalty is not ‘elasticnet’), this is set to [None].

- coefs_paths_ : dict of ndarray of shape (n_folds, n_cs, n_dof) or (n_folds, n_cs, n_l1_ratios, n_dof)

A dict with classes as the keys, and the path of coefficients obtained during cross-validating across each fold (n_folds) and then across each Cs (n_cs). The size of the coefficients is the number of degrees of freedom (n_dof), i.e. without intercept n_dof=n_features and with intercept n_dof=n_features+1. If penalty='elasticnet', there is an additional dimension for the number of l1_ratio values (n_l1_ratios), which gives a shape of (n_folds, n_cs, n_l1_ratios, n_dof). See also parameter use_legacy_attributes.

- scores_ : dict

A dict with classes as the keys, and the values as the grid of scores obtained during cross-validating each fold. The same score is repeated across all classes. Each dict value has shape (n_folds, n_cs) or (n_folds, n_cs, n_l1_ratios) if penalty='elasticnet'. See also parameter use_legacy_attributes.

- C_ : ndarray of shape (n_classes,) or (1,)

The value of C that maps to the best score, repeated n_classes times. If refit is set to False, the best C is the average of the C’s that correspond to the best score for each fold. C_ is of shape (1,) when the problem is binary. See also parameter use_legacy_attributes.

- l1_ratio_ : ndarray of shape (n_classes,) or (n_classes - 1,)

The value of l1_ratio that maps to the best score, repeated n_classes times. If refit is set to False, the best l1_ratio is the average of the l1_ratio’s that correspond to the best score for each fold. l1_ratio_ is of shape (1,) when the problem is binary. See also parameter use_legacy_attributes.

- n_iter_ : ndarray of shape (1, n_folds, n_cs) or (1, n_folds, n_cs, n_l1_ratios)

Actual number of iterations for all classes, folds and Cs. If penalty='elasticnet', the shape is (1, n_folds, n_cs, n_l1_ratios). See also parameter use_legacy_attributes.

- n_features_in_ : int

Number of features seen during fit.

Added in version 0.24.

- feature_names_in_ : ndarray of shape (n_features_in_,)

Names of features seen during fit. Defined only when X has feature names that are all strings.

Added in version 1.0.

See also

LogisticRegression : Logistic regression without tuning the hyperparameter C.

Examples

    >>> from sklearn.datasets import load_iris
    >>> from sklearn.linear_model import LogisticRegressionCV
    >>> X, y = load_iris(return_X_y=True)
    >>> clf = LogisticRegressionCV(
    ...     cv=5, random_state=0, use_legacy_attributes=False, l1_ratios=(0,)
    ... ).fit(X, y)
    >>> clf.predict(X[:2, :])
    array([0, 0])
    >>> clf.predict_proba(X[:2, :]).shape
    (2, 3)
    >>> clf.score(X, y)
    0.98...

- decision_function(X)

Predict confidence scores for samples.

The confidence score for a sample is proportional to the signed distance of that sample to the hyperplane.

- Parameters:

- X : {array-like, sparse matrix} of shape (n_samples, n_features)

The data matrix for which we want to get the confidence scores.

- Returns:

- scores : ndarray of shape (n_samples,) or (n_samples, n_classes)

Confidence scores per (n_samples, n_classes) combination. In the binary case, confidence score for self.classes_[1] where >0 means this class would be predicted.
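The binary rule stated above (a score greater than 0 selects classes_[1]) can be checked against predict. This is a sketch on a synthetic two-class dataset; the variable manual_pred is an illustrative name, and the estimator settings are arbitrary.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegressionCV

X, y = make_classification(n_samples=200, n_features=6, random_state=0)
clf = LogisticRegressionCV(Cs=5, cv=3, max_iter=1000).fit(X, y)

scores = clf.decision_function(X)  # binary problem: one score per sample

# A positive score selects classes_[1]; otherwise classes_[0] is predicted.
manual_pred = np.where(scores > 0, clf.classes_[1], clf.classes_[0])
print(np.array_equal(manual_pred, clf.predict(X)))
```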

- densify()

Convert coefficient matrix to dense array format.

Converts the coef_ member (back) to a numpy.ndarray. This is the default format of coef_ and is required for fitting, so calling this method is only required on models that have previously been sparsified; otherwise, it is a no-op.

- Returns:

- self

Fitted estimator.

- fit(X, y, sample_weight=None, **params)

Fit the model according to the given training data.

- Parameters:

- X : {array-like, sparse matrix} of shape (n_samples, n_features)

Training vector, where n_samples is the number of samples and n_features is the number of features.

- y : array-like of shape (n_samples,)

Target vector relative to X.

- sample_weight : array-like of shape (n_samples,), default=None

Array of weights that are assigned to individual samples. If not provided, then each sample is given unit weight.

- **params : dict

Parameters to pass to the underlying splitter and scorer.

Added in version 1.4.

- Returns:

- self : object

Fitted LogisticRegressionCV estimator.
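A minimal sketch of calling fit with per-sample weights follows. The weighting scheme (class 1 counted twice) and the synthetic data are assumptions chosen only to show the call shape.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegressionCV

X, y = make_classification(n_samples=200, n_features=6, random_state=0)

# Hypothetical weighting scheme: samples of class 1 count twice as much.
sample_weight = np.where(y == 1, 2.0, 1.0)

clf = LogisticRegressionCV(Cs=5, cv=3, max_iter=1000)
fitted = clf.fit(X, y, sample_weight=sample_weight)

# fit returns the estimator itself, so calls can be chained.
print(fitted is clf)
```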

- get_metadata_routing()

Get metadata routing of this object.

Please check User Guide on how the routing mechanism works.

Added in version 1.4.

- Returns:

- routing : MetadataRouter

A MetadataRouter encapsulating routing information.

- get_params(deep=True)

Get parameters for this estimator.

- Parameters:

- deep : bool, default=True

If True, will return the parameters for this estimator and contained subobjects that are estimators.

- Returns:

- params : dict

Parameter names mapped to their values.

- predict(X)

Predict class labels for samples in X.

- Parameters:

- X : {array-like, sparse matrix} of shape (n_samples, n_features)

The data matrix for which we want to get the predictions.

- Returns:

- y_pred : ndarray of shape (n_samples,)

Vector containing the class labels for each sample.

- predict_log_proba(X)

Predict logarithm of probability estimates.

The returned estimates for all classes are ordered by the label of classes.

- Parameters:

- X : array-like of shape (n_samples, n_features)

Vector to be scored, where n_samples is the number of samples and n_features is the number of features.

- Returns:

- T : array-like of shape (n_samples, n_classes)

Returns the log-probability of the sample for each class in the model, where classes are ordered as they are in self.classes_.

- predict_proba(X)

Probability estimates.

The returned estimates for all classes are ordered by the label of classes.

For a multiclass / multinomial problem the softmax function is used to find the predicted probability of each class.

- Parameters:

- X : array-like of shape (n_samples, n_features)

Vector to be scored, where n_samples is the number of samples and n_features is the number of features.

- Returns:

- T : array-like of shape (n_samples, n_classes)

Returns the probability of the sample for each class in the model, where classes are ordered as they are in self.classes_.
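The relationship between decision_function, predict_proba and predict_log_proba in the multiclass case can be sketched as below. It assumes the default solver on a three-class dataset, so that the multinomial (softmax) formulation applies, and uses scipy.special.softmax for the manual computation.

```python
import numpy as np
from scipy.special import softmax
from sklearn.datasets import load_iris
from sklearn.linear_model import LogisticRegressionCV

X, y = load_iris(return_X_y=True)  # three classes
clf = LogisticRegressionCV(Cs=5, cv=3, max_iter=1000).fit(X, y)

proba = clf.predict_proba(X[:5])
log_proba = clf.predict_log_proba(X[:5])

# Columns follow clf.classes_ and each row sums to one.
print(proba.shape, proba.sum(axis=1))

# Assumption: with the default multinomial setup, probabilities are the
# softmax of the decision scores.
manual = softmax(clf.decision_function(X[:5]), axis=1)
print(np.allclose(proba, manual), np.allclose(log_proba, np.log(proba)))
```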

- score(X, y, sample_weight=None, **score_params)

Score using the scoring option on the given test data and labels.

- Parameters:

- X : array-like of shape (n_samples, n_features)

Test samples.

- y : array-like of shape (n_samples,)

True labels for X.

- sample_weight : array-like of shape (n_samples,), default=None

Sample weights.

- **score_params : dict

Parameters to pass to the score method of the underlying scorer.

Added in version 1.4.

- Returns:

- score : float

Score of self.predict(X) w.r.t. y.

- set_fit_request(*, sample_weight: bool | None | str = '$UNCHANGED$') -> LogisticRegressionCV

Configure whether metadata should be requested to be passed to the fit method.

Note that this method is only relevant when this estimator is used as a sub-estimator within a meta-estimator and metadata routing is enabled with enable_metadata_routing=True (see sklearn.set_config). Please check the User Guide on how the routing mechanism works.

The options for each parameter are:

True: metadata is requested, and passed to fit if provided. The request is ignored if metadata is not provided.

False: metadata is not requested and the meta-estimator will not pass it to fit.

None: metadata is not requested, and the meta-estimator will raise an error if the user provides it.

str: metadata should be passed to the meta-estimator with this given alias instead of the original name.

The default (sklearn.utils.metadata_routing.UNCHANGED) retains the existing request. This allows you to change the request for some parameters and not others.

Added in version 1.3.

- Parameters:

- sample_weight : str, True, False, or None, default=sklearn.utils.metadata_routing.UNCHANGED

Metadata routing for sample_weight parameter in fit.

- Returns:

- self : object

The updated object.

- set_params(**params)

Set the parameters of this estimator.

The method works on simple estimators as well as on nested objects (such as Pipeline). The latter have parameters of the form <component>__<parameter> so that it’s possible to update each component of a nested object.

- Parameters:

- **params : dict

Estimator parameters.

- Returns:

- self : estimator instance

Estimator instance.
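The <component>__<parameter> form can be illustrated with a small pipeline. The step names "scale" and "clf" are arbitrary labels chosen for this sketch, and the parameter values are placeholders.

```python
from sklearn.linear_model import LogisticRegressionCV
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Step names "scale" and "clf" are arbitrary labels chosen for this sketch.
pipe = Pipeline([("scale", StandardScaler()), ("clf", LogisticRegressionCV())])

# Plain parameter set directly on the estimator itself.
pipe.named_steps["clf"].set_params(max_iter=500)

# Nested parameters addressed as <component>__<parameter> on the pipeline.
pipe.set_params(clf__Cs=5, clf__cv=3)

print(pipe.get_params()["clf__Cs"], pipe.get_params()["clf__max_iter"])
```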

- set_score_request(*, sample_weight: bool | None | str = '$UNCHANGED$') -> LogisticRegressionCV

Configure whether metadata should be requested to be passed to the score method.

Note that this method is only relevant when this estimator is used as a sub-estimator within a meta-estimator and metadata routing is enabled with enable_metadata_routing=True (see sklearn.set_config). Please check the User Guide on how the routing mechanism works.

The options for each parameter are:

True: metadata is requested, and passed to score if provided. The request is ignored if metadata is not provided.

False: metadata is not requested and the meta-estimator will not pass it to score.

None: metadata is not requested, and the meta-estimator will raise an error if the user provides it.

str: metadata should be passed to the meta-estimator with this given alias instead of the original name.

The default (sklearn.utils.metadata_routing.UNCHANGED) retains the existing request. This allows you to change the request for some parameters and not others.

Added in version 1.3.

- Parameters:

- sample_weight : str, True, False, or None, default=sklearn.utils.metadata_routing.UNCHANGED

Metadata routing for sample_weight parameter in score.

- Returns:

- self : object

The updated object.

- sparsify()

Convert coefficient matrix to sparse format.

Converts the coef_ member to a scipy.sparse matrix, which for L1-regularized models can be much more memory- and storage-efficient than the usual numpy.ndarray representation.

The intercept_ member is not converted.

- Returns:

- self

Fitted estimator.

Notes

For non-sparse models, i.e. when there are not many zeros in coef_, this may actually increase memory usage, so use this method with care. A rule of thumb is that the number of zero elements, which can be computed with (coef_ == 0).sum(), must be more than 50% for this to provide significant benefits.

After calling this method, further fitting with the partial_fit method (if any) will not work until you call densify.
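A sparsify and densify round trip might look like the sketch below. The dataset is synthetic, and zero_fraction is an illustrative name for the share of zero coefficients mentioned in the rule of thumb above; with the default L2 penalty it is typically close to 0, so sparsifying here only demonstrates the mechanics.

```python
import numpy as np
from scipy import sparse
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegressionCV

X, y = make_classification(n_samples=200, n_features=20, random_state=0)
clf = LogisticRegressionCV(Cs=5, cv=3, max_iter=1000).fit(X, y)

# Fraction of exactly-zero coefficients; the rule of thumb above suggests
# sparsifying only when this exceeds 0.5.
zero_fraction = (clf.coef_ == 0).mean()

before = clf.predict(X)
clf.sparsify()
is_sparse = sparse.issparse(clf.coef_)
after = clf.predict(X)  # prediction still works with a sparse coef_

clf.densify()  # restore the ndarray form needed for further fitting
print(zero_fraction, is_sparse, isinstance(clf.coef_, np.ndarray))
```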