sklearn.ensemble.RandomTreesEmbedding

- class sklearn.ensemble.RandomTreesEmbedding(n_estimators=10, max_depth=5, min_samples_split=2, min_samples_leaf=1, min_weight_fraction_leaf=0.0, max_leaf_nodes=None, sparse_output=True, n_jobs=1, random_state=None, verbose=0, warm_start=False)

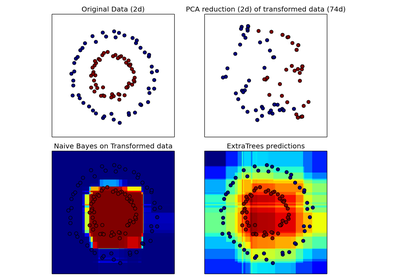

An ensemble of totally random trees.

An unsupervised transformation of a dataset to a high-dimensional sparse representation. A datapoint is coded according to which leaf of each tree it is sorted into. Using a one-hot encoding of the leaves, this leads to a binary coding with as many ones as there are trees in the forest.

The dimensionality of the resulting representation is n_out <= n_estimators * max_leaf_nodes. If max_leaf_nodes == None, the number of leaf nodes is at most n_estimators * 2 ** max_depth.
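The coding and the bound on its dimensionality can be checked on toy data. The following is a minimal sketch, assuming a scikit-learn installation in which the parameters documented here are available; the random data and the variable names are illustrative only.

```python
import numpy as np
from sklearn.ensemble import RandomTreesEmbedding

# Toy data, made up for illustration.
rng = np.random.RandomState(0)
X = rng.rand(100, 4)

n_estimators, max_depth = 10, 3
embedder = RandomTreesEmbedding(
    n_estimators=n_estimators, max_depth=max_depth, random_state=0
)
X_embedded = embedder.fit_transform(X)  # sparse matrix by default

# Number of output columns (one per leaf across all trees).
n_out = X_embedded.shape[1]

# Each sample falls into exactly one leaf per tree, so every row
# should contain n_estimators ones.
ones_per_row = np.asarray(X_embedded.sum(axis=1)).ravel()

print(n_out, "<=", n_estimators * 2 ** max_depth)
print(ones_per_row[:5])
```

With max_leaf_nodes left at None, n_out should not exceed n_estimators * 2 ** max_depth, and every row sum should equal n_estimators.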

Parameters: n_estimators : int, optional (default=10)

Number of trees in the forest.

max_depth : int or None, optional (default=5)

The maximum depth of each tree. If None, nodes are expanded until all leaves are pure or until all leaves contain fewer than min_samples_split samples. Ignored if max_leaf_nodes is not None.

min_samples_split : integer, optional (default=2)

The minimum number of samples required to split an internal node.

min_samples_leaf : integer, optional (default=1)

The minimum number of samples in newly created leaves. A split is discarded if, after the split, one of the leaves would contain fewer than min_samples_leaf samples.

min_weight_fraction_leaf : float, optional (default=0.)

The minimum weighted fraction of the input samples required to be at a leaf node.

max_leaf_nodes : int or None, optional (default=None)

Grow trees with max_leaf_nodes in best-first fashion. Best nodes are defined by their relative reduction in impurity. If None, the number of leaf nodes is unlimited. If not None, max_depth is ignored.

sparse_output : bool, optional (default=True)

Whether to return a sparse CSR matrix (the default) or a dense array compatible with dense pipeline operators.
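To illustrate the two output types, here is a small sketch that fits the same embedding twice, once with each setting of sparse_output. The data and variable names are assumptions made for the example.

```python
import numpy as np
from scipy import sparse
from sklearn.ensemble import RandomTreesEmbedding

rng = np.random.RandomState(0)
X = rng.rand(60, 3)  # toy data, for illustration only

# Same seed and tree settings; only the output container differs.
sparse_emb = RandomTreesEmbedding(
    n_estimators=5, max_depth=2, random_state=0
).fit_transform(X)
dense_emb = RandomTreesEmbedding(
    n_estimators=5, max_depth=2, sparse_output=False, random_state=0
).fit_transform(X)

print(sparse.issparse(sparse_emb), type(dense_emb).__name__)
```

The dense form is convenient for downstream steps that do not accept sparse input, at the cost of storing the zeros explicitly.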

n_jobs : integer, optional (default=1)

The number of jobs to run in parallel for both fit and transform. If -1, the number of jobs is set to the number of cores.

random_state : int, RandomState instance or None, optional (default=None)

If int, random_state is the seed used by the random number generator. If RandomState instance, random_state is the random number generator. If None, the random number generator is the RandomState instance used by np.random.
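As a sketch of what an integer seed buys in practice, the example below fits two independent embedders with the same random_state and compares their output. The data and names are illustrative assumptions.

```python
import numpy as np
from sklearn.ensemble import RandomTreesEmbedding

rng = np.random.RandomState(42)
X = rng.rand(40, 3)  # toy data, for illustration only

# Two separate estimators that share the same integer seed.
first = RandomTreesEmbedding(n_estimators=4, max_depth=3, random_state=7)
second = RandomTreesEmbedding(n_estimators=4, max_depth=3, random_state=7)

coded_first = first.fit_transform(X).toarray()
coded_second = second.fit_transform(X).toarray()

# With identical seeds and data, the two codings are expected to match.
same_coding = np.array_equal(coded_first, coded_second)
print(same_coding)
```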

verbose : int, optional (default=0)

Controls the verbosity of the tree building process.

warm_start : bool, optional (default=False)

When set to True, reuse the solution of the previous call to fit and add more estimators to the ensemble; otherwise, fit a whole new forest.
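A minimal sketch of the warm_start behaviour described above: the forest is fitted with a few trees, n_estimators is raised with set_params, and a second call to fit is expected to add only the missing trees. The data and the tree counts are assumptions chosen for the example.

```python
import numpy as np
from sklearn.ensemble import RandomTreesEmbedding

rng = np.random.RandomState(0)
X = rng.rand(50, 3)  # toy data, for illustration only

embedder = RandomTreesEmbedding(
    n_estimators=5, max_depth=3, warm_start=True, random_state=0
)
embedder.fit(X)
n_before = len(embedder.estimators_)

# Ask for a larger ensemble and fit again; existing trees are kept.
embedder.set_params(n_estimators=8)
embedder.fit(X)
n_after = len(embedder.estimators_)

print(n_before, n_after)
```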

Attributes: estimators_ : list of ExtraTreeRegressor

The collection of fitted sub-estimators.

References

[R136] P. Geurts, D. Ernst, and L. Wehenkel, “Extremely randomized trees”, Machine Learning, 63(1), 3-42, 2006.

[R137] F. Moosmann, B. Triggs, and F. Jurie, “Fast discriminative visual codebooks using randomized clustering forests”, NIPS 2007.

Methods

| Method | Description |
| --- | --- |
| apply(X) | Apply trees in the forest to X, return leaf indices. |
| fit(X[, y, sample_weight]) | Fit estimator. |
| fit_transform(X[, y, sample_weight]) | Fit estimator and transform dataset. |
| get_params([deep]) | Get parameters for this estimator. |
| set_params(**params) | Set the parameters of this estimator. |
| transform(X) | Transform dataset. |

- __init__(n_estimators=10, max_depth=5, min_samples_split=2, min_samples_leaf=1, min_weight_fraction_leaf=0.0, max_leaf_nodes=None, sparse_output=True, n_jobs=1, random_state=None, verbose=0, warm_start=False)

- apply(X)

Apply trees in the forest to X, return leaf indices.

Parameters: X : array-like or sparse matrix, shape = [n_samples, n_features]

The input samples. Internally, they are converted to dtype=np.float32, and a sparse matrix, if provided, is converted to a sparse csr_matrix.

Returns: X_leaves : array-like, shape = [n_samples, n_estimators]

For each datapoint x in X and for each tree in the forest, return the index of the leaf x ends up in.
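The sketch below calls apply on a fitted embedder and relates the leaf indices to the width of the transformed output. It assumes the one-hot columns are derived from the leaves reached by the training data; the toy data and names are illustrative.

```python
import numpy as np
from sklearn.ensemble import RandomTreesEmbedding

rng = np.random.RandomState(0)
X = rng.rand(50, 3)  # toy data, for illustration only

embedder = RandomTreesEmbedding(n_estimators=7, max_depth=3, random_state=0)
X_embedded = embedder.fit_transform(X)

# One row per sample, one column per tree; entries are leaf node indices.
leaves = embedder.apply(X)

# Count the distinct leaves reached in each tree. Under the assumption
# stated above, their total should equal the number of output columns.
distinct_leaves = sum(len(np.unique(leaves[:, j])) for j in range(leaves.shape[1]))

print(leaves.shape, distinct_leaves, X_embedded.shape[1])
```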

- feature_importances_

Return the feature importances (the higher, the more important the feature).

Returns: feature_importances_ : array, shape = [n_features]

- fit(X, y=None, sample_weight=None)

Fit estimator.

Parameters: X : array-like or sparse matrix, shape=(n_samples, n_features)

The input samples. Use dtype=np.float32 for maximum efficiency. Sparse matrices are also supported; use a sparse csc_matrix for maximum efficiency.

Returns: self : object

Returns self.

- fit_transform(X, y=None, sample_weight=None)

Fit estimator and transform dataset.

Parameters: X : array-like or sparse matrix, shape=(n_samples, n_features)

Input data used to build forests. Use dtype=np.float32 for maximum efficiency.

Returns: X_transformed : sparse matrix, shape=(n_samples, n_out)

Transformed dataset.

- get_params(deep=True)

Get parameters for this estimator.

Parameters: deep : boolean, optional

If True, will return the parameters for this estimator and contained subobjects that are estimators.

Returns: params : mapping of string to any

Parameter names mapped to their values.

- set_params(**params)

Set the parameters of this estimator.

The method works on simple estimators as well as on nested objects (such as pipelines). The latter have parameters of the form <component>__<parameter> so that it’s possible to update each component of a nested object.

Returns: self :
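As an illustration of the <component>__<parameter> form, the sketch below updates parameters of two steps inside a pipeline. The step names "embed" and "svd" and the choice of TruncatedSVD as a second step are assumptions made for the example.

```python
from sklearn.decomposition import TruncatedSVD
from sklearn.ensemble import RandomTreesEmbedding
from sklearn.pipeline import Pipeline

# Hypothetical two-step pipeline: embed, then reduce dimensionality.
pipe = Pipeline([
    ("embed", RandomTreesEmbedding(n_estimators=10, random_state=0)),
    ("svd", TruncatedSVD(n_components=2)),
])

# Nested parameters are addressed as <step name>__<parameter name>.
pipe.set_params(embed__n_estimators=20, svd__n_components=3)

params = pipe.get_params(deep=True)
print(params["embed__n_estimators"], params["svd__n_components"])
```

On a single estimator, the plain parameter name is used instead, for example set_params(n_estimators=20).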

- transform(X)

Transform dataset.

Parameters: X : array-like or sparse matrix, shape=(n_samples, n_features)

Input data to be transformed. Use dtype=np.float32 for maximum efficiency. Sparse matrices are also supported; use a sparse csr_matrix for maximum efficiency.

Returns: X_transformed : sparse matrix, shape=(n_samples, n_out)

Transformed dataset.
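A short sketch of the usual fit-then-transform pattern: the embedder is fitted on one array and then used to code previously unseen samples, which are expected to land in the same n_out columns. The data split and names are illustrative assumptions.

```python
import numpy as np
from sklearn.ensemble import RandomTreesEmbedding

rng = np.random.RandomState(0)
X_train = rng.rand(80, 3)  # toy training data, for illustration only
X_new = rng.rand(20, 3)    # toy unseen data

embedder = RandomTreesEmbedding(n_estimators=6, max_depth=3, random_state=0)
embedder.fit(X_train)

coded_train = embedder.transform(X_train)
coded_new = embedder.transform(X_new)

# Both outputs share the column layout learned during fit.
print(coded_train.shape, coded_new.shape)
```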