sklearn.ensemble.BaggingRegressor

- class sklearn.ensemble.BaggingRegressor(base_estimator=None, n_estimators=10, *, max_samples=1.0, max_features=1.0, bootstrap=True, bootstrap_features=False, oob_score=False, warm_start=False, n_jobs=None, random_state=None, verbose=0)

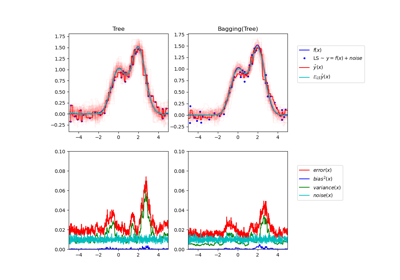

A Bagging regressor.

A Bagging regressor is an ensemble meta-estimator that fits base regressors, each on a random subset of the original dataset, and then aggregates their individual predictions (either by voting or by averaging) to form a final prediction. Such a meta-estimator can typically be used as a way to reduce the variance of a black-box estimator (e.g., a decision tree), by introducing randomization into its construction procedure and then making an ensemble out of it.

This algorithm encompasses several works from the literature. When random subsets of the dataset are drawn as random subsets of the samples, then this algorithm is known as Pasting [1]. If samples are drawn with replacement, then the method is known as Bagging [2]. When random subsets of the dataset are drawn as random subsets of the features, then the method is known as Random Subspaces [3]. Finally, when base estimators are built on subsets of both samples and features, then the method is known as Random Patches [4].
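The four variants named above correspond to combinations of max_samples, max_features, and bootstrap. The following is a minimal sketch of that mapping, not an official recipe: the 0.5 fractions, the dataset sizes, and the names variants and fitted are illustrative assumptions.

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import BaggingRegressor

# Synthetic data; the sizes are arbitrary and chosen only for illustration.
X, y = make_regression(n_samples=200, n_features=8, random_state=0)

# Keyword settings that correspond to each variant named in the text.
variants = {
    # Subsets of samples, drawn without replacement.
    "pasting": dict(max_samples=0.5, bootstrap=False),
    # Subsets of samples, drawn with replacement.
    "bagging": dict(max_samples=0.5, bootstrap=True),
    # All samples, subsets of features.
    "random_subspaces": dict(max_features=0.5, bootstrap=False),
    # Subsets of both samples and features.
    "random_patches": dict(max_samples=0.5, max_features=0.5, bootstrap=False),
}

fitted = {
    name: BaggingRegressor(n_estimators=5, random_state=0, **kwargs).fit(X, y)
    for name, kwargs in variants.items()
}

for name, model in fitted.items():
    n_rows = len(model.estimators_samples_[0])
    n_cols = len(model.estimators_features_[0])
    print(f"{name}: {n_rows} rows, {n_cols} columns per base estimator")
```

The default base estimator (a decision tree) is used here so that the sketch does not depend on how the base estimator is passed.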

Read more in the User Guide.

New in version 0.15.

- Parameters

- base_estimator : object, default=None

The base estimator to fit on random subsets of the dataset. If None, then the base estimator is a DecisionTreeRegressor.

- n_estimators : int, default=10

The number of base estimators in the ensemble.

- max_samples : int or float, default=1.0

The number of samples to draw from X to train each base estimator (with replacement by default, see bootstrap for more details).

If int, then draw max_samples samples.

If float, then draw max_samples * X.shape[0] samples.

- max_features : int or float, default=1.0

The number of features to draw from X to train each base estimator (without replacement by default, see bootstrap_features for more details).

If int, then draw max_features features.

If float, then draw max_features * X.shape[1] features.

- bootstrap : bool, default=True

Whether samples are drawn with replacement. If False, sampling without replacement is performed.

- bootstrap_features : bool, default=False

Whether features are drawn with replacement.

- oob_score : bool, default=False

Whether to use out-of-bag samples to estimate the generalization error. Only available if bootstrap=True.

- warm_start : bool, default=False

When set to True, reuse the solution of the previous call to fit and add more estimators to the ensemble; otherwise, fit a whole new ensemble. See the Glossary.

- n_jobs : int, default=None

The number of jobs to run in parallel for both fit and predict. None means 1 unless in a joblib.parallel_backend context. -1 means using all processors. See Glossary for more details.

- random_state : int, RandomState instance or None, default=None

Controls the random resampling of the original dataset (sample wise and feature wise). If the base estimator accepts a random_state attribute, a different seed is generated for each instance in the ensemble. Pass an int for reproducible output across multiple function calls. See Glossary.

- verbose : int, default=0

Controls the verbosity when fitting and predicting.
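As a rough illustration of the int versus float convention for max_samples and max_features described above, the sketch below fits two ensembles that should draw the same number of rows and columns per base estimator. The dataset shape (120 by 6) and the names by_count and by_fraction are assumptions made for this example.

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import BaggingRegressor

X, y = make_regression(n_samples=120, n_features=6, random_state=0)

# Absolute counts: 30 samples and 3 features per base estimator.
by_count = BaggingRegressor(
    n_estimators=4, max_samples=30, max_features=3, random_state=0
).fit(X, y)

# Fractions of X.shape[0] == 120 and X.shape[1] == 6 that give the same sizes.
by_fraction = BaggingRegressor(
    n_estimators=4, max_samples=0.25, max_features=0.5, random_state=0
).fit(X, y)

for model in (by_count, by_fraction):
    print(len(model.estimators_samples_[0]), len(model.estimators_features_[0]))
```

Because the type decides the interpretation, the int 1 and the float 1.0 are not interchangeable: the former requests a single sample or feature, the latter requests all of them.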

- Attributes

- base_estimator_ : estimator

The base estimator from which the ensemble is grown.

- n_features_ : int

DEPRECATED: Attribute n_features_ was deprecated in version 1.0 and will be removed in 1.2.

- n_features_in_ : int

Number of features seen during fit.

New in version 0.24.

- feature_names_in_ : ndarray of shape (n_features_in_,)

Names of features seen during fit. Defined only when X has feature names that are all strings.

New in version 1.0.

- estimators_ : list of estimators

The collection of fitted sub-estimators.

- estimators_samples_ : list of arrays

The subset of drawn samples for each base estimator.

- estimators_features_ : list of arrays

The subset of drawn features for each base estimator.

- oob_score_ : float

Score of the training dataset obtained using an out-of-bag estimate. This attribute exists only when oob_score is True.

- oob_prediction_ : ndarray of shape (n_samples,)

Prediction computed with out-of-bag estimate on the training set. If n_estimators is small it might be possible that a data point was never left out during the bootstrap. In this case, oob_prediction_ might contain NaN. This attribute exists only when oob_score is True.
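The out-of-bag attributes listed above can be inspected as in the following sketch. It assumes a synthetic dataset and uses 50 estimators so that every training sample is very likely to be left out of at least one bootstrap draw; both choices are illustrative.

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import BaggingRegressor

X, y = make_regression(n_samples=150, n_features=5, noise=10.0, random_state=0)

# oob_score=True requires bootstrap=True (the default).
regr = BaggingRegressor(
    n_estimators=50, bootstrap=True, oob_score=True, random_state=0
).fit(X, y)

# R^2 computed only from predictions made by estimators that did not
# see the corresponding sample during training.
print("OOB score:", regr.oob_score_)

# One out-of-bag prediction per training sample.
print("OOB prediction shape:", regr.oob_prediction_.shape)
```

The out-of-bag score gives an estimate of generalization performance without setting aside a separate validation split.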

See also

BaggingClassifier : A Bagging classifier.

References

- [1] L. Breiman, “Pasting small votes for classification in large databases and on-line”, Machine Learning, 36(1), 85-103, 1999.

- [2] L. Breiman, “Bagging predictors”, Machine Learning, 24(2), 123-140, 1996.

- [3] T. Ho, “The random subspace method for constructing decision forests”, Pattern Analysis and Machine Intelligence, 20(8), 832-844, 1998.

- [4] G. Louppe and P. Geurts, “Ensembles on Random Patches”, Machine Learning and Knowledge Discovery in Databases, 346-361, 2012.

Examples

    >>> from sklearn.svm import SVR
    >>> from sklearn.ensemble import BaggingRegressor
    >>> from sklearn.datasets import make_regression
    >>> X, y = make_regression(n_samples=100, n_features=4,
    ...                        n_informative=2, n_targets=1,
    ...                        random_state=0, shuffle=False)
    >>> regr = BaggingRegressor(base_estimator=SVR(),
    ...                         n_estimators=10, random_state=0).fit(X, y)
    >>> regr.predict([[0, 0, 0, 0]])
    array([-2.8720...])

Methods

- fit(X, y[, sample_weight]): Build a Bagging ensemble of estimators from the training set (X, y).

- get_params([deep]): Get parameters for this estimator.

- predict(X): Predict regression target for X.

- score(X, y[, sample_weight]): Return the coefficient of determination of the prediction.

- set_params(**params): Set the parameters of this estimator.

- property estimators_samples_

The subset of drawn samples for each base estimator.

Returns a dynamically generated list of indices identifying the samples used for fitting each member of the ensemble, i.e., the in-bag samples.

Note: the list is re-created at each call to the property in order to reduce the object memory footprint by not storing the sampling data. Thus fetching the property may be slower than expected.
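Since the list is rebuilt on every access, it can help to fetch the property once and reuse the result. The sketch below does that and derives the out-of-bag rows for each estimator; the dataset and the variable names are assumptions made for illustration.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.ensemble import BaggingRegressor

X, y = make_regression(n_samples=50, n_features=4, random_state=0)
regr = BaggingRegressor(n_estimators=3, random_state=0).fit(X, y)

# Fetch the property once; each access regenerates the index arrays.
in_bag_per_estimator = regr.estimators_samples_

all_indices = np.arange(X.shape[0])
for i, in_bag in enumerate(in_bag_per_estimator):
    # Rows never drawn for this estimator are its out-of-bag samples.
    out_of_bag = np.setdiff1d(all_indices, in_bag)
    print(
        f"estimator {i}: {np.unique(in_bag).size} distinct in-bag rows, "
        f"{out_of_bag.size} out-of-bag rows"
    )
```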

- fit(X, y, sample_weight=None)

Build a Bagging ensemble of estimators from the training set (X, y).

- Parameters

- X : {array-like, sparse matrix} of shape (n_samples, n_features)

The training input samples. Sparse matrices are accepted only if they are supported by the base estimator.

- y : array-like of shape (n_samples,)

The target values (class labels in classification, real numbers in regression).

- sample_weight : array-like of shape (n_samples,), default=None

Sample weights. If None, then samples are equally weighted. Note that this is supported only if the base estimator supports sample weighting.

- Returns

- self : object

Fitted estimator.
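How a repeated call to fit behaves depends on the warm_start parameter. The following sketch, under the assumption of a small synthetic dataset, grows an existing ensemble from 5 to 8 estimators instead of rebuilding it; the variable names are illustrative.

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import BaggingRegressor

X, y = make_regression(n_samples=100, n_features=4, random_state=0)

regr = BaggingRegressor(n_estimators=5, warm_start=True, random_state=0)
regr.fit(X, y)
n_before = len(regr.estimators_)
first_estimator = regr.estimators_[0]

# Raise the target size, then call fit again: with warm_start=True the
# existing estimators are kept and only the additional ones are trained.
regr.set_params(n_estimators=8)
regr.fit(X, y)
n_after = len(regr.estimators_)

print(n_before, n_after)
print(regr.estimators_[0] is first_estimator)
```

With warm_start=False, the second call to fit would discard the first five estimators and train eight new ones.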

- get_params(deep=True)

Get parameters for this estimator.

- Parameters

- deep : bool, default=True

If True, will return the parameters for this estimator and contained subobjects that are estimators.

- Returns

- params : dict

Parameter names mapped to their values.

- property n_features_

DEPRECATED: Attribute n_features_ was deprecated in version 1.0 and will be removed in 1.2. Use n_features_in_ instead.

- predict(X)

Predict regression target for X.

The predicted regression target of an input sample is computed as the mean of the predicted regression targets of the estimators in the ensemble.

- Parameters

- X : {array-like, sparse matrix} of shape (n_samples, n_features)

The input samples. Sparse matrices are accepted only if they are supported by the base estimator.

- Returns

- y : ndarray of shape (n_samples,)

The predicted values.
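The averaging described for predict can be checked by hand, as in this sketch. It assumes that each fitted base estimator expects only the columns listed in estimators_features_, which is why X is indexed before calling the sub-estimator; the dataset and variable names are illustrative.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.ensemble import BaggingRegressor

X, y = make_regression(n_samples=100, n_features=6, random_state=0)
regr = BaggingRegressor(n_estimators=7, max_features=0.5, random_state=0).fit(X, y)

# Each base estimator was trained on its own feature subset, so select
# the matching columns of X before asking it for predictions.
per_estimator = np.stack([
    est.predict(X[:, feats])
    for est, feats in zip(regr.estimators_, regr.estimators_features_)
])

# Average over the ensemble axis and compare with the ensemble prediction.
manual_mean = per_estimator.mean(axis=0)
print(np.allclose(manual_mean, regr.predict(X)))
```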

- score(X, y, sample_weight=None)

Return the coefficient of determination of the prediction.

The coefficient of determination \(R^2\) is defined as \((1 - \frac{u}{v})\), where \(u\) is the residual sum of squares ((y_true - y_pred) ** 2).sum() and \(v\) is the total sum of squares ((y_true - y_true.mean()) ** 2).sum(). The best possible score is 1.0 and it can be negative (because the model can be arbitrarily worse). A constant model that always predicts the expected value of y, disregarding the input features, would get an \(R^2\) score of 0.0.

- Parameters

- X : array-like of shape (n_samples, n_features)

Test samples. For some estimators this may be a precomputed kernel matrix or a list of generic objects instead with shape (n_samples, n_samples_fitted), where n_samples_fitted is the number of samples used in the fitting for the estimator.

- y : array-like of shape (n_samples,) or (n_samples, n_outputs)

True values for X.

- sample_weight : array-like of shape (n_samples,), default=None

Sample weights.

- Returns

- score : float

\(R^2\) of self.predict(X) with respect to y.

Notes

The \(R^2\) score used when calling score on a regressor uses multioutput='uniform_average' from version 0.23 to keep consistent with default value of r2_score. This influences the score method of all the multioutput regressors (except for MultiOutputRegressor).
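The definition of \(R^2\) given for score can be reproduced directly from the two sums of squares. The sketch below is a worked check on synthetic single-output data (an assumption of this example), using the same symbols u and v as the text.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.ensemble import BaggingRegressor

X, y = make_regression(n_samples=100, n_features=4, noise=5.0, random_state=0)
regr = BaggingRegressor(n_estimators=10, random_state=0).fit(X, y)

y_pred = regr.predict(X)
u = ((y - y_pred) ** 2).sum()    # residual sum of squares
v = ((y - y.mean()) ** 2).sum()  # total sum of squares
r2_manual = 1 - u / v

print(np.isclose(r2_manual, regr.score(X, y)))

# A constant model predicting y.mean() has u equal to v, so its score is 0.
y_const = np.full_like(y, y.mean())
u_const = ((y - y_const) ** 2).sum()
r2_const = 1 - u_const / v
print(r2_const)
```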

- set_params(**params)

Set the parameters of this estimator.

The method works on simple estimators as well as on nested objects (such as Pipeline). The latter have parameters of the form <component>__<parameter> so that it’s possible to update each component of a nested object.

- Parameters

- **params : dict

Estimator parameters.

- Returns

- self : estimator instance

Estimator instance.
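The <component>__<parameter> form also applies to the base estimator wrapped by the ensemble. In the sketch below the base estimator is passed positionally and the nested key is looked up from get_params rather than hard-coded, an assumption made so that the example does not depend on the exact name of the component; nested_c_key is a hypothetical variable name.

```python
from sklearn.ensemble import BaggingRegressor
from sklearn.svm import SVR

# Base estimator passed as the first positional argument.
regr = BaggingRegressor(SVR(C=1.0), n_estimators=10)

# deep=True also returns the parameters of the nested base estimator.
params = regr.get_params(deep=True)

# Locate the nested key for SVR's C, of the form <component>__C.
nested_c_key = next(key for key in params if key.endswith("__C"))
print(nested_c_key, params[nested_c_key])

# Update a top-level parameter and a nested one in a single call.
regr.set_params(n_estimators=20, **{nested_c_key: 10.0})
print(regr.n_estimators, regr.get_params()[nested_c_key])
```

The same nested keys are what a parameter grid would use when tuning the base estimator through the ensemble.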